Concept | Introduction to machine learning

In this lesson, we’ll take a brief look at machine learning in the context of data science, including:

What machine learning (ML) is.

The purpose of ML.

ML patterns and rules.

Let’s start with data science

Data science combines methods from statistics and mathematics with business domain knowledge. Organizations use data science to solve a wide range of problems and to improve their products and services.

Data science teams across industries use algorithms and statistical methods to get insights from the various data sources available to them.

These data sources can be:

Structured, such as a spreadsheet, or

Unstructured, such as an image or a tweet.

Examples of such data sources include social media comments and shares, weather data, and economic data such as job or housing market figures.

Machine learning algorithms

Machine learning algorithms are loosely inspired by the way humans learn from experience. The basic idea behind a machine learning algorithm is to:

Give it enough data that it can learn patterns, much as humans learn from experience, without being explicitly programmed with rules.

Let it improve its learning automatically over time as it receives more data.

It learns from patterns, historical records and events, similar to the way that humans learn.

Humans make decisions based on past experiences, such as having felt pain after touching a hot surface, having read that lions can attack people, or having heard a loud noise and then seen a car crash.

A computer can be programmed to run algorithms that output decisions or predictions much faster than humans can. We can then use this information to help solve real-world problems.

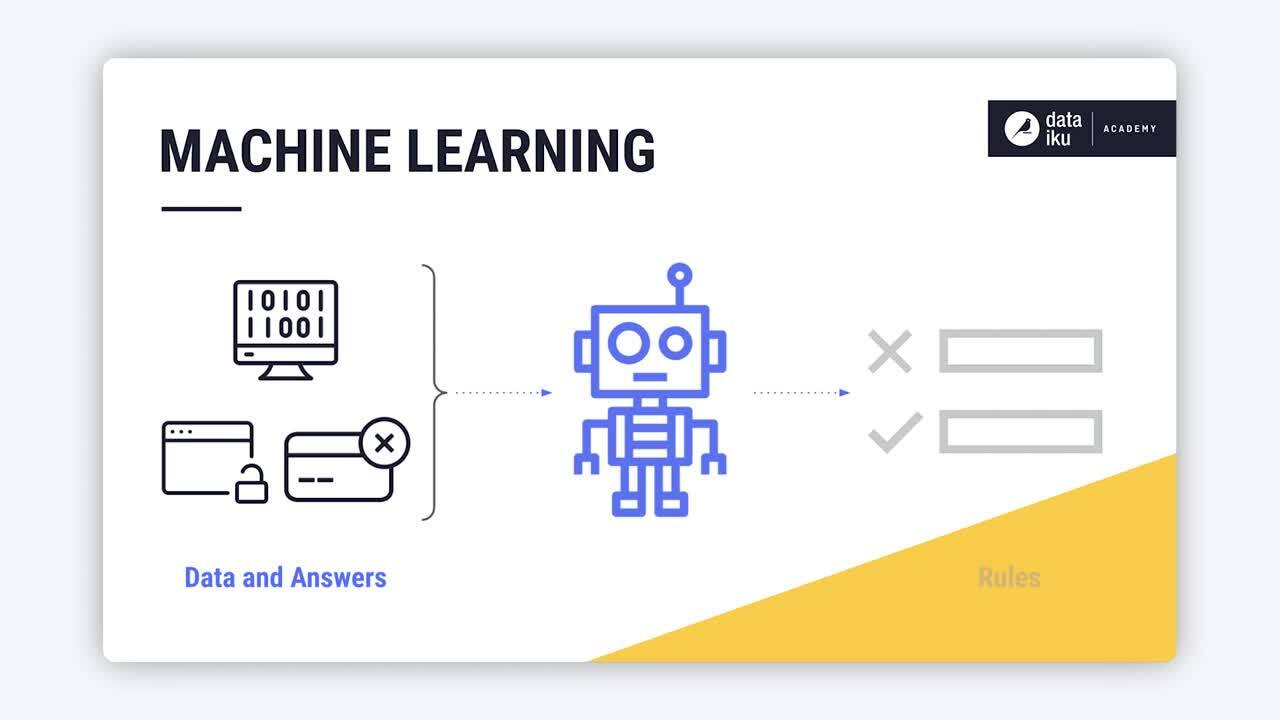

Before machine learning, traditional computer programming performed actions based on explicitly written conditional rules, such as if-then-else statements. To build a system that approves or declines credit card applications this way, for example, programmers could spend long hours writing thousands of lines of code. For this reason, having computers imitate human learning and automatically improve over time is an attractive option.
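To make the contrast concrete, here is a minimal sketch of the traditional approach: a few hand-written if/elif/else rules for the credit card example. The function name, its inputs, and every threshold are hypothetical, chosen only for illustration; a real system would need far more rules.

```python
# Traditional, rule-based decision: every rule is written by hand.
# The function name, inputs, and thresholds are hypothetical, for illustration only.

def approve_credit_card(income: float, credit_score: int, existing_debt: float) -> bool:
    """Return True to approve, False to decline, using fixed if/elif/else rules."""
    if credit_score < 600:
        return False
    elif existing_debt > 0.5 * income:
        return False
    elif income < 20_000:
        return False
    else:
        return True


print(approve_credit_card(income=55_000, credit_score=710, existing_debt=8_000))  # True
print(approve_credit_card(income=55_000, credit_score=580, existing_debt=8_000))  # False
```

Every threshold here is fixed by a programmer, and covering more situations means writing and maintaining more branches by hand.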

A machine learns when we provide data, such as past experiences and their answers, as inputs. It can then look for patterns in the data to learn rules, which it uses to make decisions or predictions.

Algorithms are the core of a machine learning model. An algorithm is a set of rules, or steps, that a computer can follow.
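By contrast, here is a minimal sketch of a machine learning a rule from data, assuming the simplest possible rule: approve when a single input is at or above a threshold. The past decisions in `history` are hypothetical, and `learn_threshold` is our own illustrative helper. Instead of a programmer choosing the threshold, the code tries each observed value as a candidate and keeps the one that misclassifies the fewest past examples (a one-feature "decision stump").

```python
# Learn a one-feature rule "approve if x >= t" from labeled past examples.
# The data and the helper name are hypothetical, for illustration only.

def learn_threshold(examples: list[tuple[float, bool]]) -> float:
    """Return the threshold t with the fewest errors for the rule: approve if x >= t."""
    candidates = sorted({x for x, _ in examples})
    best_t, best_errors = candidates[0], len(examples) + 1
    for t in candidates:
        # Count examples where the rule's decision disagrees with the known answer.
        errors = sum((x >= t) != label for x, label in examples)
        if errors < best_errors:
            best_t, best_errors = t, errors
    return best_t


# (credit score, was the application approved?)
history = [(520, False), (580, False), (610, False), (640, True),
           (690, True), (720, True), (760, True)]

threshold = learn_threshold(history)
print(threshold)          # 640
print(700 >= threshold)   # True: the learned rule approves a new score of 700
```

Given different historical data, the same code would learn a different threshold, which is the sense in which the rule is learned rather than programmed.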

Prediction algorithm

A common type of machine learning algorithm is prediction, where we want to know an outcome y given an input x. A prediction algorithm takes enough example inputs x, together with their known outcomes y, and learns a mapping between them. The goal is to find a relationship between x and y that allows a machine learning model to determine the values of y from the values of x.

This relationship between x and y can be linear or non-linear.

Linear relationship between input (x) and outcome (y)

In the case of a linear relationship, the algorithm determines the straight line through the plotted points that minimizes the error, that is, the distance between the plotted points and the line. In ordinary least squares, the most common approach, this error is measured as the sum of the squared vertical distances between each point and the line.
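A minimal sketch of what "minimizing the error" measures, assuming ordinary least squares: the error of a candidate line is the sum of the squared vertical distances between the points and the line. The study-hours data is hypothetical, and `sum_squared_errors` is our own helper. The line with the smaller total fits the points better.

```python
# Sum of squared errors (SSE) for a candidate line y = m*x + b.
# Hypothetical data: hours studied (x) and exam scores (y).
hours = [1, 2, 3, 4, 5]
scores = [52, 55, 61, 64, 68]


def sum_squared_errors(m: float, b: float, xs: list[float], ys: list[float]) -> float:
    """Total squared vertical distance between each point and the line y = m*x + b."""
    return sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys))


# Compare two candidate lines: the smaller error is the better fit.
print(round(sum_squared_errors(4.1, 47.7, hours, scores), 2))  # 1.9
print(round(sum_squared_errors(5.0, 45.0, hours, scores), 2))  # 10.0
```

For this particular data, the first line, y = 4.1x + 47.7, is in fact the least-squares line, so no other straight line gives a smaller total error.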

The equation of a simple linear regression line is y = mx + b, where:

The constant value m is the slope or gradient of the line.

The constant b is the y-intercept value.

Once the algorithm has determined the values of the slope and intercept, the model is said to be trained on the data.

The algorithm can then take in new data where only the values of x are known, and use these values to predict the values of y.
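The training and prediction steps above can be sketched with the standard ordinary least squares formulas for simple linear regression: m = sum((x - x_mean) * (y - y_mean)) / sum((x - x_mean)^2) and b = y_mean - m * x_mean. The data is hypothetical (hours studied against exam score), and `fit_line` and `predict` are our own illustrative names, not a library API.

```python
# Train y = m*x + b by ordinary least squares, then predict y for new x values.
# Hypothetical data: hours studied (x) -> exam score (y).

def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Return (m, b) minimizing the sum of squared errors (needs at least two distinct x)."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    m = sxy / sxx            # slope
    b = y_mean - m * x_mean  # y-intercept
    return m, b


def predict(m: float, b: float, xs: list[float]) -> list[float]:
    """Apply the trained line to inputs whose outcomes are unknown."""
    return [m * x + b for x in xs]


hours = [1, 2, 3, 4, 5]
scores = [52, 55, 61, 64, 68]

m, b = fit_line(hours, scores)                       # training
print(round(m, 2), round(b, 2))                      # 4.1 47.7
print([round(y, 1) for y in predict(m, b, [6, 7])])  # [72.3, 76.4]
```

In practice a library such as scikit-learn would do the fitting, but the closed-form version shows that "training" here just means computing two numbers from the data.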

Non-linear relationship between input (x) and outcome (y)

The graph of a linear relationship is a straight line, because y changes by the same amount for each unit change in x.

The graph of a non-linear relationship is curved, because the change in y isn’t the same for each unit change in x.
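One way to see the difference is to step x forward one unit at a time and record how much y changes. The sketch below uses two hypothetical functions, the line y = 2x + 1 and the curve y = -x^2 + 6x; the function names are ours. The line's step changes are all equal, while the curve's are not.

```python
# Compare how much y changes for each one-unit step in x.
# Both functions are hypothetical examples chosen for illustration.
from typing import Callable


def linear(x: int) -> int:
    return 2 * x + 1          # straight line with slope 2


def nonlinear(x: int) -> int:
    return -(x ** 2) + 6 * x  # curve (a parabola that peaks at x = 3)


def step_changes(f: Callable[[int], int], xs: list[int]) -> list[int]:
    """Change in f(x) between each pair of consecutive x values."""
    return [f(x1) - f(x0) for x0, x1 in zip(xs, xs[1:])]


xs = [0, 1, 2, 3, 4, 5]
print(step_changes(linear, xs))     # [2, 2, 2, 2, 2]
print(step_changes(nonlinear, xs))  # [5, 3, 1, -1, -3]
```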

In the simple linear regression example of study hours and exam scores, each additional hour of study produces the same change in the outcome, or score. However, if we instead used the length of time spent on the exam as the predictor of the exam outcome, the relationship would not be linear. Its graph would be a curve that peaks and then drops as time increases, due to factors such as test fatigue.
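To capture a curve that peaks, one option (our choice for illustration, not necessarily the course's method) is to fit a quadratic, y = a*x^2 + b*x + c, by least squares instead of a straight line. The data below is hypothetical: exam duration in hours against score, constructed so that it lies exactly on the curve y = -2x^2 + 12x + 50, which peaks at x = 3. The helper names `solve_3x3` and `fit_quadratic` are ours.

```python
# Fit y = a*x**2 + b*x + c by least squares: a curved (non-linear) relationship.
# Hypothetical data: exam duration in hours (x) vs exam score (y).

def solve_3x3(A: list[list[float]], rhs: list[float]) -> list[float]:
    """Solve the 3x3 system A v = rhs by Gaussian elimination with partial pivoting."""
    n = 3
    M = [row[:] + [r] for row, r in zip(A, rhs)]  # augmented matrix [A | rhs]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= factor * M[col][k]
    v = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        v[r] = (M[r][n] - sum(M[r][k] * v[k] for k in range(r + 1, n))) / M[r][r]
    return v


def fit_quadratic(xs: list[float], ys: list[float]) -> tuple[float, float, float]:
    """Return (a, b, c) minimizing the sum of squared errors of y = a*x**2 + b*x + c."""
    s = [sum(x ** p for x in xs) for p in range(5)]                  # s[p] = sum of x**p
    t = [sum(y * x ** p for x, y in zip(xs, ys)) for p in range(3)]  # t[p] = sum of y*x**p
    # Normal equations: the derivative of the squared error is zero for a, b and c.
    A = [[s[4], s[3], s[2]],
         [s[3], s[2], s[1]],
         [s[2], s[1], s[0]]]
    a, b, c = solve_3x3(A, [t[2], t[1], t[0]])
    return a, b, c


duration = [1, 2, 3, 4, 5]
score = [60, 66, 68, 66, 60]

a, b, c = fit_quadratic(duration, score)
print(round(a, 3), round(b, 3), round(c, 3))  # approximately a = -2, b = 12, c = 50
print(round(-b / (2 * a), 3))                 # x at the peak, approximately 3
```

For this symmetric data, a straight line fitted by least squares would be flat (y = 64, slope 0), because the rise and the fall cancel out, so it cannot represent the peak at all. The quadratic is still fitted by minimizing squared errors; only the shape of the equation changes.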

Examples of prediction use cases

Businesses and organizations use machine learning to learn rules that help them solve problems. Some examples are:

Predicting wellness, where a machine learns the specific symptoms that indicate whether a person is likely sick or not sick.

Predicting qualification, where a machine learns the specific financial information, personal information and account detail patterns that indicate a client is qualified for a loan.

Predicting purchase patterns, where a machine learns the specific purchases that indicate a customer belongs to a particular customer segment that might be more interested in product A than in product B.

Predicting sales, where a machine learns from several years of historical sales data to predict future sales.

Understanding customers, where researchers use machine learning to classify the sentiment of text, such as tweets, as positive or negative.

Predicting maintenance, where an automobile manufacturer uses machine learning to predict upcoming maintenance needs, making manufacturing more efficient and less costly.

Supervised versus unsupervised learning

Most machine learning techniques for learning these patterns and rules fall into one of two categories: supervised learning and unsupervised learning.

Supervised learning

Supervised learning applies when each input has a corresponding known output, and we want to find a mapping between the inputs and outputs in the data. Data of this kind is known as labeled data.

The algorithm will then use the labeled data to:

Create a mapping of input features to the known outputs.

Apply this mapping to new, unlabeled inputs to produce outputs, known as predictions.
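A minimal supervised learning sketch, based on the wellness example from earlier: each hypothetical input (body temperature in °C, resting heart rate) comes with a known label. We use a 1-nearest-neighbor classifier, one of the simplest supervised methods and our choice for illustration, which predicts the label of the closest labeled example. The data and the `predict` function are hypothetical.

```python
# Supervised learning sketch: a 1-nearest-neighbor classifier.
# Labeled data: each input (temperature in °C, resting heart rate) has a known label.
# All values are hypothetical.
import math

labeled = [
    ((36.6, 68), "not sick"),
    ((36.9, 72), "not sick"),
    ((37.0, 75), "not sick"),
    ((38.4, 95), "sick"),
    ((39.1, 102), "sick"),
    ((38.8, 98), "sick"),
]


def predict(features: tuple[float, float]) -> str:
    """Return the label of the closest labeled example (Euclidean distance)."""
    _, label = min(labeled, key=lambda item: math.dist(item[0], features))
    return label


# New, unlabeled inputs: the model predicts their outputs.
print(predict((36.8, 70)))   # not sick
print(predict((38.9, 100)))  # sick
```

The two features are on very different scales, so heart rate dominates the distance here; in practice, features are usually rescaled before distances are compared.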

Throughout this course, we’ll refer to supervised learning as prediction.

Unsupervised learning

In contrast, unsupervised learning applies when the input data is unlabeled. In other words, there is no known correct output, or label, associated with each observation used to train the model. The goal of an unsupervised learning algorithm is to identify patterns, similarities, densities, and structure in the data.

Throughout this course, we’ll refer to this learning technique as clustering, which is the most common technique of unsupervised learning.
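A minimal clustering sketch, assuming k-means, a widely used clustering algorithm, on one-dimensional data. The hypothetical values are customers' annual spending with no labels attached, and we pick the number of clusters, k, ourselves. The algorithm alternates between assigning each value to its nearest center and moving each center to the mean of its assigned values. The simple deterministic starting centers are our own simplification; library implementations typically use smarter initialization such as k-means++.

```python
# Unsupervised learning sketch: k-means clustering on unlabeled 1-D data.
# Hypothetical data: customers' annual spending, with no labels attached.

def k_means_1d(values: list[float], k: int, iterations: int = 10) -> list[float]:
    """Return k cluster centers found by repeated assign-and-average steps."""
    # Simple deterministic start: evenly spaced picks from the sorted values.
    centers = sorted(values)[:: max(1, len(values) // k)][:k]
    for _ in range(iterations):
        clusters: list[list[float]] = [[] for _ in centers]
        for v in values:
            # Assign each value to the nearest current center.
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            clusters[nearest].append(v)
        # Move each center to the mean of its cluster (keep it if the cluster is empty).
        centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
    return centers


spend = [120, 150, 130, 900, 950, 1000, 140, 980]
print(k_means_1d(spend, k=2))  # [135.0, 957.5]
```

Without being told which customers belong together, the algorithm finds two groups: low spenders centered around 135 and high spenders centered around 957.5.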

Note

New, unseen data fed to a trained model as part of a production workflow is typically unlabeled. We apply our model to this unlabeled data to get predictions or identify patterns.

Next steps

In this lesson, we looked at the concept of machine learning, its purpose and how a machine uses patterns to learn and identify rules.

In the next section, we’ll take a closer look at the concept of labeled and unlabeled data and how labeled data is used in supervised machine learning.