Concept | Managed folders#


This article introduces managed folders in Dataiku.

It looks at:

Situations needing a managed folder.

How to use managed folders in the Flow.

How to interact with managed folders using the Dataiku APIs.

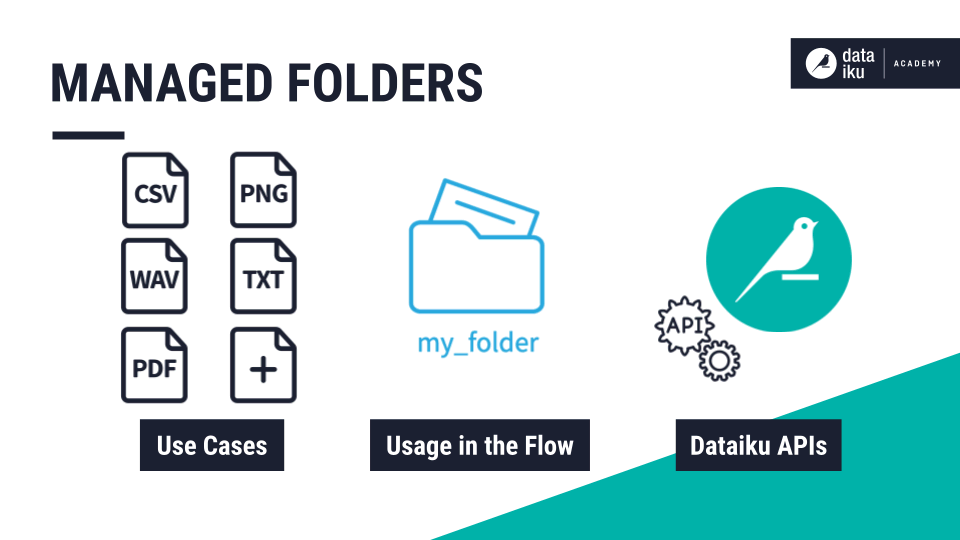

Use cases for managed folders#

Managed folders in a Flow let coders treat files simply as files. With the help of the Dataiku APIs, you can manipulate these files programmatically, just as you would any other file.

This might be the case for a folder of CSV files, or any other supported data format. But it’s also of critical importance for handling non-supported data formats, such as images, audio, videos, or PDFs.

Whenever you need to store and manipulate files of any type, supported or not, managed folders give you a handle on unstructured storage in Dataiku.

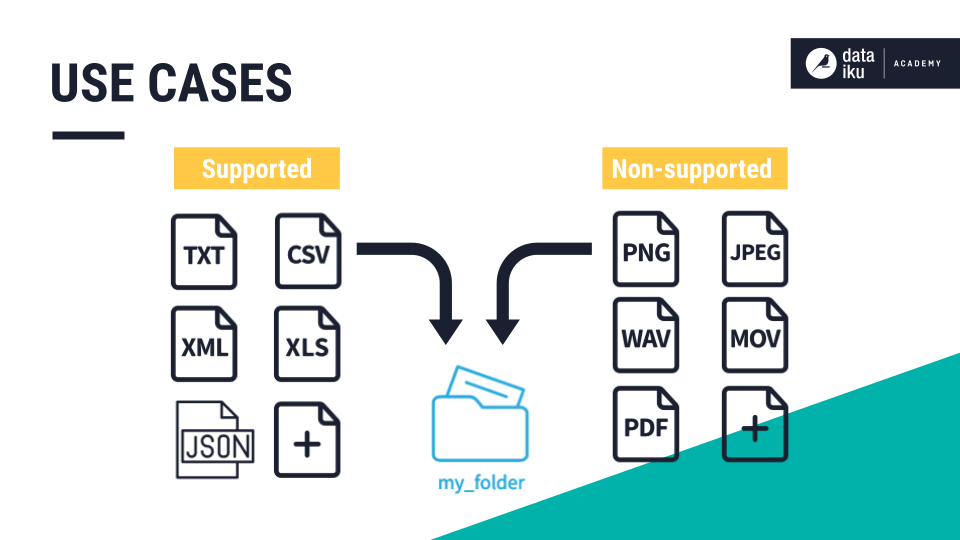

Usage in the Flow#

To create a folder in the Flow, open the new dataset menu and choose the folder option. After naming the folder, you can drag files into it.

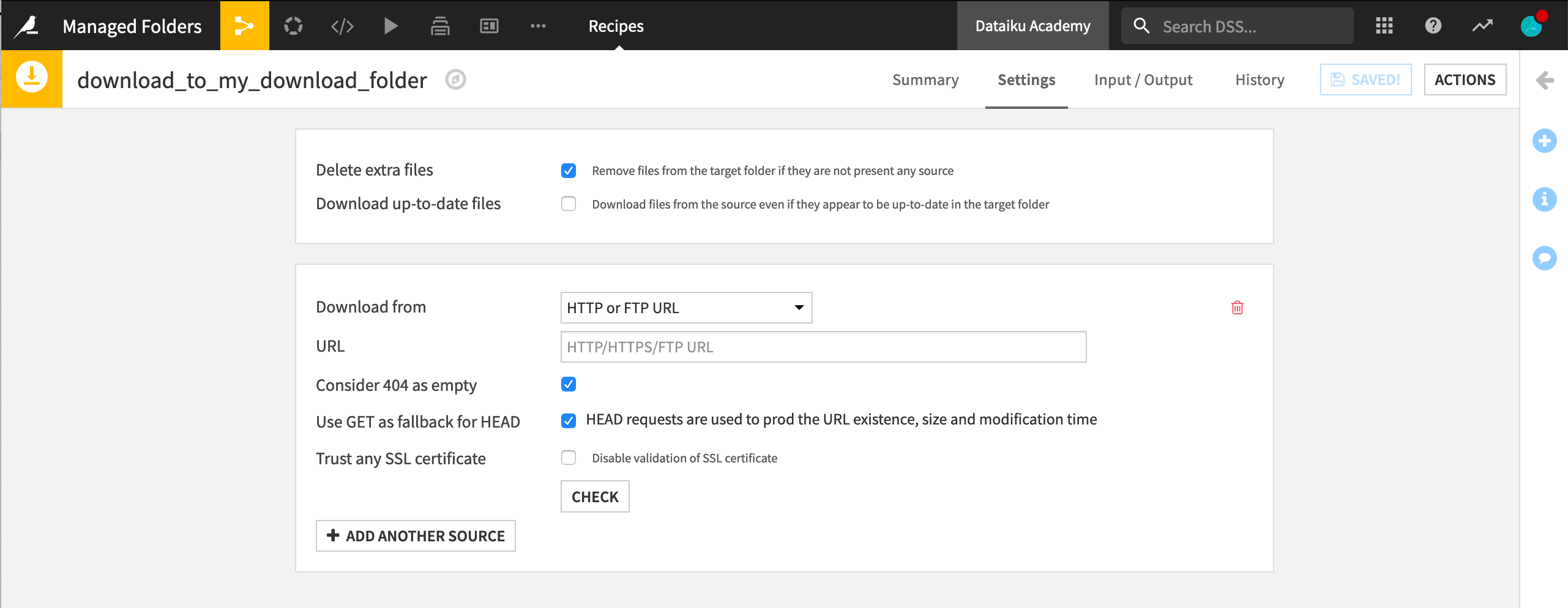

Alternatively, you can use a Download recipe, where you provide the source and the path of the file or files to download into the folder.

Most often, though, a managed folder serves as the input, the output, or both, of a code recipe in the Flow. Declaring a folder as the input or output of a recipe is similar to choosing a dataset as the input or output of a recipe.

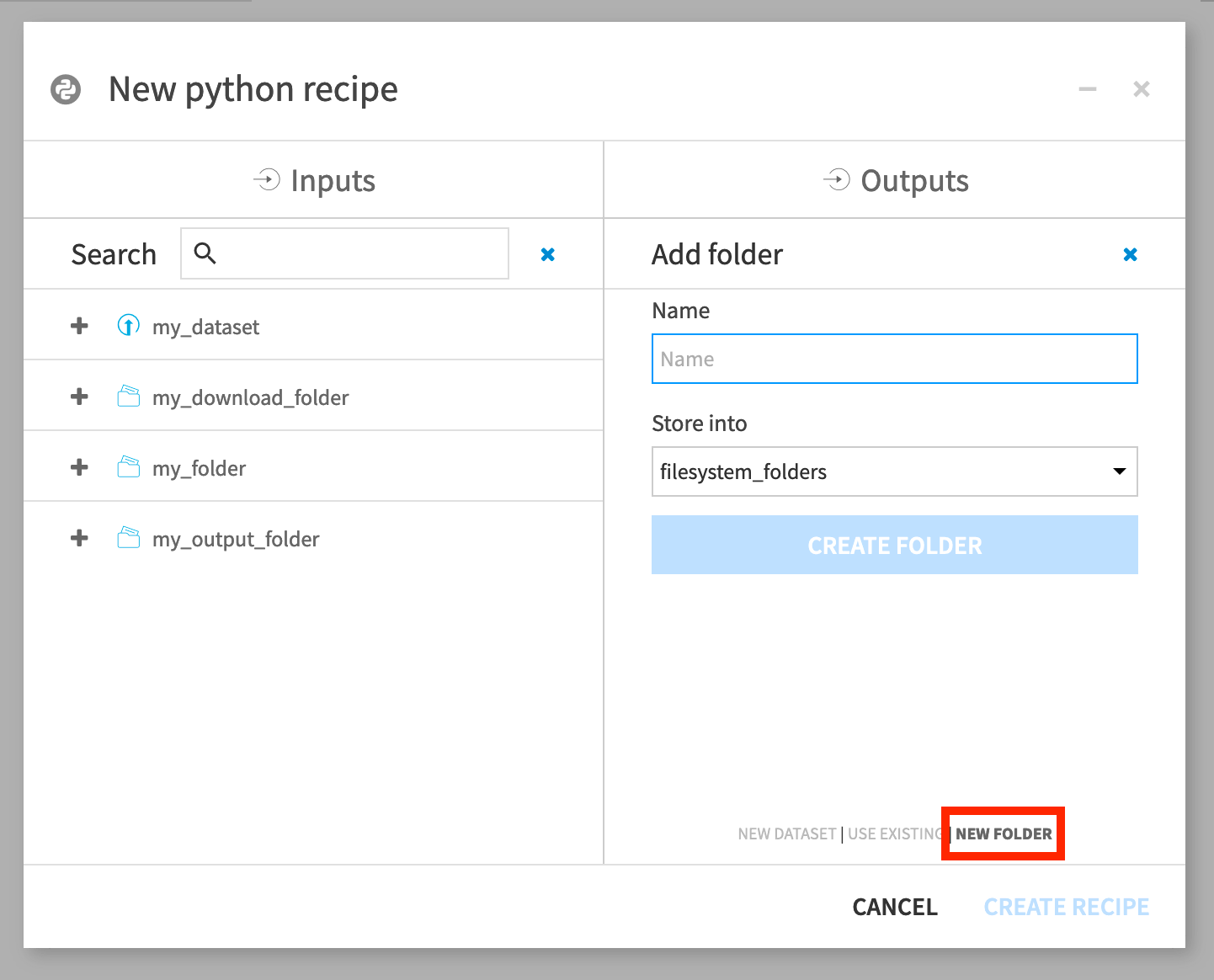

In the recipe creation dialog, you can choose a folder as input, just as you would a dataset. Likewise, you can create a new folder as output, just as you would a dataset output.

Folder location#

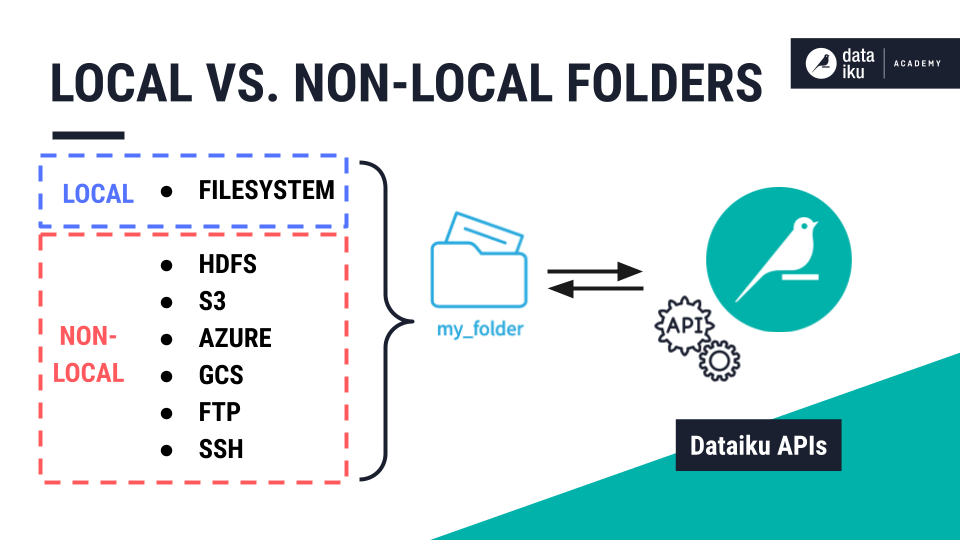

As with a dataset output, you can choose a storage location. With a folder, though, that location must be a filesystem-like connection.

Examples of filesystem-like connections include the local filesystem, HDFS, S3, Azure, GCS, FTP, and SSH.

Dataiku considers managed folders that use the local filesystem as “local” and those that use an external connection as “non-local.”

Whether a folder is local or non-local has consequences for how to interact with it through the Dataiku API.

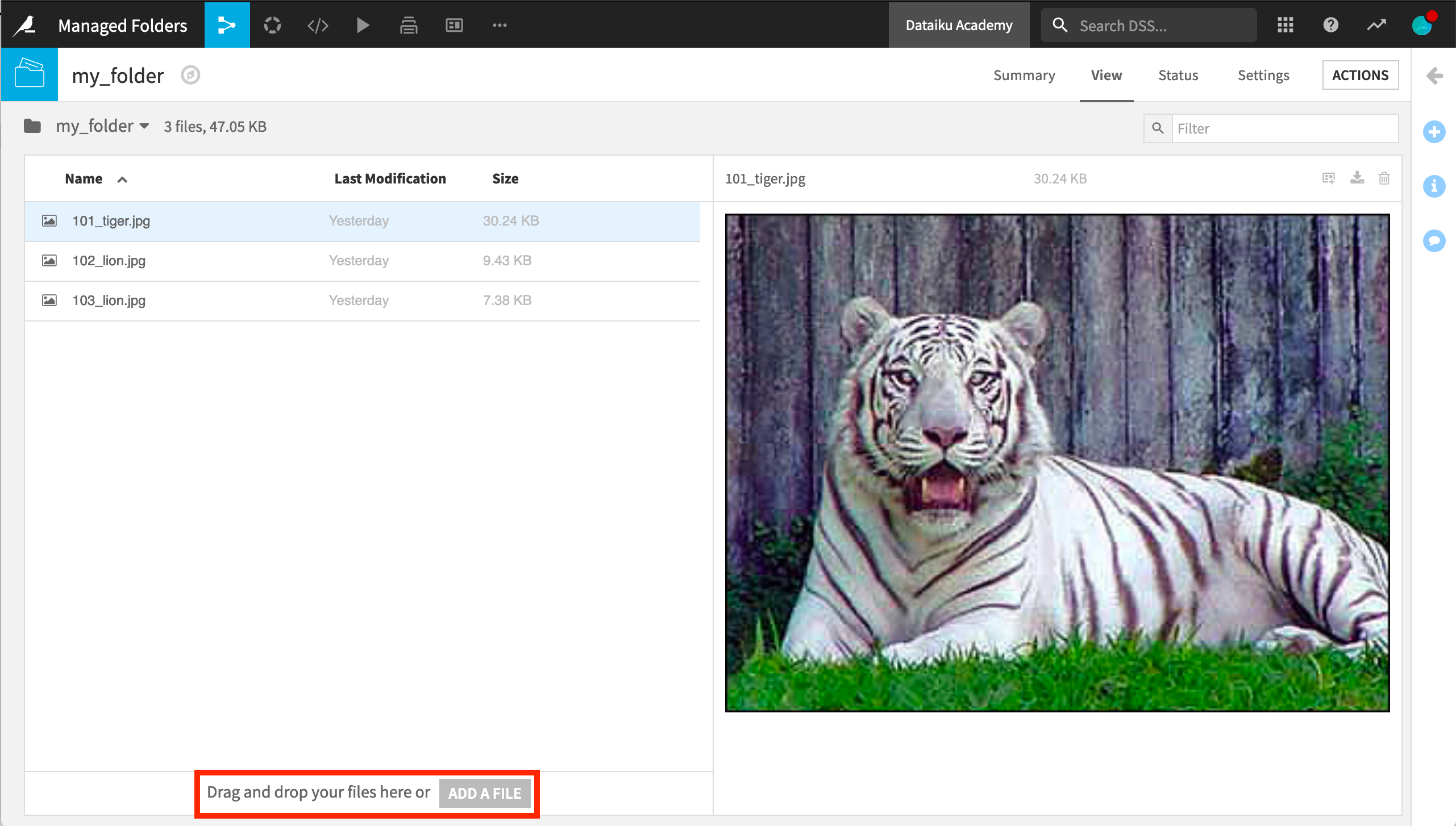

Folders + Dataiku API#

When a code recipe has an input or output folder, the default code references the folder by its unique ID. You can also reference it by the name you gave the folder.

```python
import dataiku

handle = dataiku.Folder("folder_name")
```

Streaming is the recommended way to read files from and write files to a managed folder. For this, you can use Dataiku’s stream APIs.

The stream APIs download and upload folder contents without needing a filesystem path, so they also work when the Dataiku API can’t provide one, as is the case for non-local folders.

For example, to read a file from a folder as a stream of bytes, use the get_download_stream() method.

```python
# Read a file from the folder as bytes
with handle.get_download_stream("myinputfile.txt") as f:
    data = f.read()
```
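A single read() call loads the whole file into memory. For large files, reading in chunks is gentler on memory. The following is a minimal sketch, assuming the stream returned by get_download_stream() accepts a size argument to read() like an ordinary binary file object. The helper `sha256_of_folder_file` and the `InMemoryFolder` class are hypothetical names introduced here for illustration. The stand-in class exists only so the sketch can run outside Dataiku.

```python
import hashlib
import io


def sha256_of_folder_file(folder, path, chunk_size=1024 * 1024):
    """Hash a file in a folder without loading it into memory all at once."""
    digest = hashlib.sha256()
    with folder.get_download_stream(path) as stream:
        # Keep reading fixed-size chunks until the stream returns b"".
        for chunk in iter(lambda: stream.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


class InMemoryFolder:
    """Hypothetical stand-in for dataiku.Folder (not part of the Dataiku API)."""

    def __init__(self, files):
        self._files = dict(files)

    def get_download_stream(self, path):
        return io.BytesIO(self._files[path])


demo = InMemoryFolder({"myinputfile.txt": b"hello managed folders"})
checksum = sha256_of_folder_file(demo, "myinputfile.txt", chunk_size=4)
```

Inside a recipe or notebook, you would pass a real dataiku.Folder handle instead of the stand-in.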

To write a file to a folder, use the upload_stream() method.

```python
# Copy a local file to the folder (binary mode, because the upload carries bytes)
with open("local_file_to_upload", "rb") as f:
    handle.upload_stream("name_of_file_in_folder", f)
```
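The two stream methods combine naturally in a code recipe that reads from an input folder and writes to an output folder. The sketch below shows one possible shape for that round trip. The function `transform_file` and the `InMemoryFolder` class are hypothetical names introduced for illustration. The stand-in mimics only get_download_stream() and upload_stream(), so the example can run without a Dataiku instance.

```python
import io


def transform_file(src_folder, src_path, dst_folder, dst_path, transform):
    """Read a file from one folder, transform its bytes, write it to another."""
    with src_folder.get_download_stream(src_path) as stream:
        data = stream.read()
    # upload_stream() takes a file-like object, so wrap the bytes in BytesIO.
    dst_folder.upload_stream(dst_path, io.BytesIO(transform(data)))


class InMemoryFolder:
    """Hypothetical stand-in for dataiku.Folder (not part of the Dataiku API)."""

    def __init__(self, files=None):
        self.files = dict(files or {})

    def get_download_stream(self, path):
        return io.BytesIO(self.files[path])

    def upload_stream(self, path, f):
        self.files[path] = f.read()


source = InMemoryFolder({"myinputfile.txt": b"hello"})
target = InMemoryFolder()
transform_file(source, "myinputfile.txt", target, "upper.txt", bytes.upper)
```

In a real recipe, `source` and `target` would be dataiku.Folder handles for the recipe’s input and output folders.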

For the specific case of local folders, you could use the get_path() method to obtain the filesystem path to the folder.

```python
# LOCAL FOLDERS ONLY
import os.path

import dataiku

handle = dataiku.Folder("folder_name")
path = handle.get_path()

# Read a file from a LOCAL folder
with open(os.path.join(path, "myinputfile.txt")) as f:
    data = f.read()
```

However, using the stream APIs whenever possible is strongly encouraged because the path API won’t work for any folder on an external connection.
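Some libraries accept only a filesystem path, not a stream. One way to keep using the stream APIs in that situation is to copy the file to a temporary local file first. The sketch below illustrates the idea. The context manager `local_copy` and the `InMemoryFolder` class are hypothetical names introduced here, not part of the Dataiku API, and the stand-in lets the example run outside Dataiku.

```python
import io
import os
import shutil
import tempfile
from contextlib import contextmanager


@contextmanager
def local_copy(folder, path):
    """Yield the path of a temporary local copy of a file stored in a folder."""
    suffix = os.path.splitext(path)[1]  # keep the extension for picky libraries
    tmp = tempfile.NamedTemporaryFile(suffix=suffix, delete=False)
    try:
        # Stream the contents to disk, then close both handles.
        with tmp, folder.get_download_stream(path) as stream:
            shutil.copyfileobj(stream, tmp)
        yield tmp.name
    finally:
        os.remove(tmp.name)  # clean up whether or not the caller raised


class InMemoryFolder:
    """Hypothetical stand-in for dataiku.Folder (not part of the Dataiku API)."""

    def __init__(self, files):
        self._files = dict(files)

    def get_download_stream(self, path):
        return io.BytesIO(self._files[path])


demo = InMemoryFolder({"report.pdf": b"%PDF-1.4 demo"})
with local_copy(demo, "report.pdf") as local_path:
    with open(local_path, "rb") as f:
        content = f.read()
    existed_inside = os.path.exists(local_path)
exists_after = os.path.exists(local_path)
```

This works the same way for local and non-local folders, at the cost of one extra copy on disk.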

Next steps#

You can learn more in the reference documentation on Managed folders.

To leverage managed folders with the Dataiku API, see Managed folders in the Developer Guide.