Tutorial | Building your feature store in Dataiku#

In this article, you’ll follow step-by-step instructions to learn how to leverage Dataiku’s capabilities to implement a Feature Store approach.

Prerequisites#

Dataiku 11.0 or later.

A Full Designer user profile on the Dataiku for AI/ML or Enterprise AI packages.

Understand feature stores. To learn more about this concept, visit the reference documentation on Feature Store.

Be familiar with Dataiku topics particularly Designer and MLOps. To learn more about these topics, visit the Dataiku Academy learning paths Advanced Designer and MLOps Practitioner.

Note

While most of the capabilities mentioned in this article are available since Dataiku version 8.0, the concept of a centralized place to publish and search for Feature Groups is available in Dataiku version 11.0 or above. If you have an earlier version, you can use the Catalog as a centralized place to publish and search for Feature Groups.

You’ll need Dataiku version 11.0 or above to import the demo projects described in the instructions in this article.

Introduction#

Building a feature store isn’t a standalone project. It’s a way to organize your data projects to put in common as much as possible the arid work of building valuable datasets, with proper and useful features in them. These features can then be used to train your model and score the data in production.

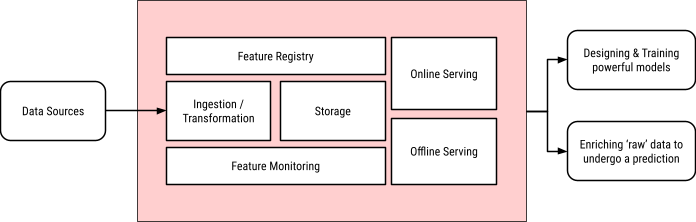

Here is a typical schematic of a feature store:

All these components are available in Dataiku, and so it’s a matter of understanding how to put them in motion, which is the goal of this article.

Feature Store Capability |

Dataiku Capability |

Related Documentation |

|---|---|---|

Ingestion / Transformation |

Flow with recipes |

|

Storage |

Datasets based on extensive Connections library |

|

Monitoring |

Metrics & Checks with Scenarios |

|

Registry |

Feature Store |

|

Offline serving |

DSS Automation Server, Join recipes |

|

Online serving |

DSS API nodes, especially enrichment capabilities |

Get started#

We will use a set of projects to showcase a typical setup. You can import these projects to a test instance to explore them.

FSUSERPROJECT.zip - Original project, without a feature store approach

FSFEATUREGROUP.zip - Dedicated project to build Feature Groups

FSUSERPROJECTWITHFEATURESTORE.zip - Revamped project using Feature Groups

Note

These projects have been built on Dataiku version 11.0. You’ll need Dataiku version 11.0 or above to import them.

They’re using mostly PostgreSQL connections, except for one that uses MongoDB (for import purposes, you can create a fake MongoDB connection).

The Reverse Geocoder plugin is required to compute data for the model. We don’t need this plugin for the feature store logic. This is purely for this precise project.

Be careful which order you import the projects: FSFEATUREGROUP needs to be imported before FSUSERPROJECTWITHFEATURESTORE.

Once imported, the output datasets of the FSFEATUREGROUP project won’t be published in your instance’s feature store. You need to do it manually (see below for more details). Similarly, the API endpoints used for online serving will be there, but not deployed to an API node.

The structure of this article is:

Understand the original standalone project.

Build a specific project to generate the feature groups, including feature monitoring and automated builds

Update the original project to use the new feature groups.

Let’s look at a standard project#

We’re starting this journey with a payment card authorization project. Let’s understand what we’ve achieved so far.

Note

The relevance of this project or the actual ML model used isn’t this article’s topic. We use it only as a practical support for the demonstration.

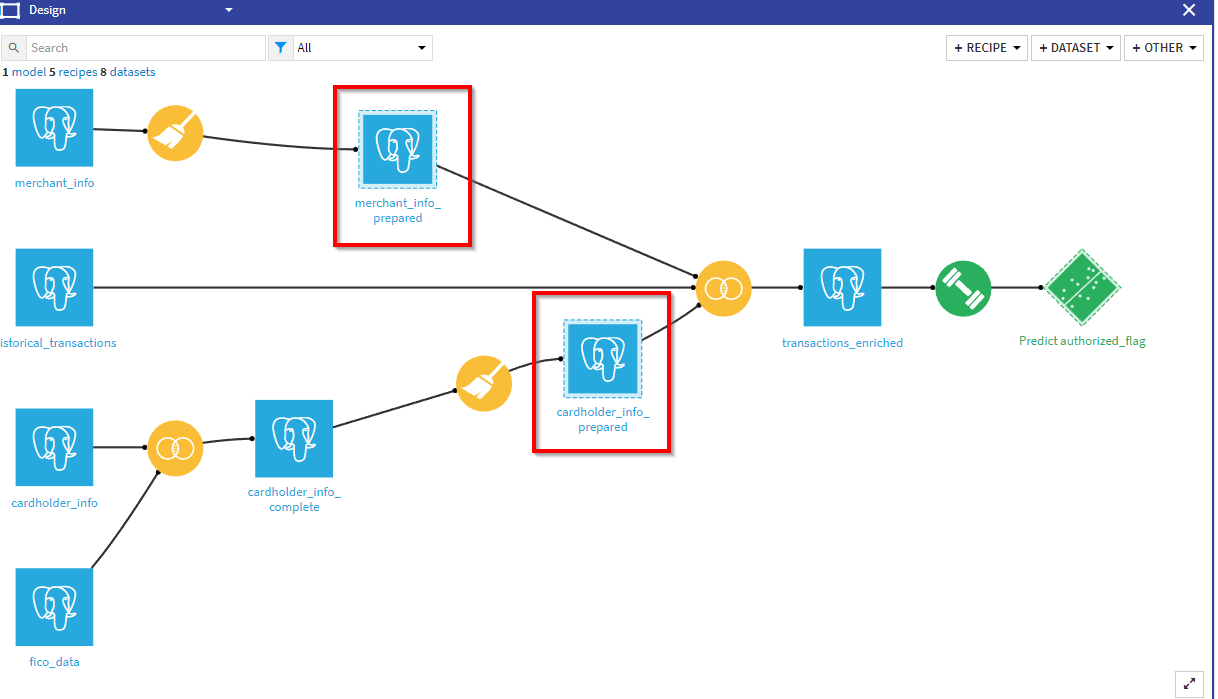

Flow overview#

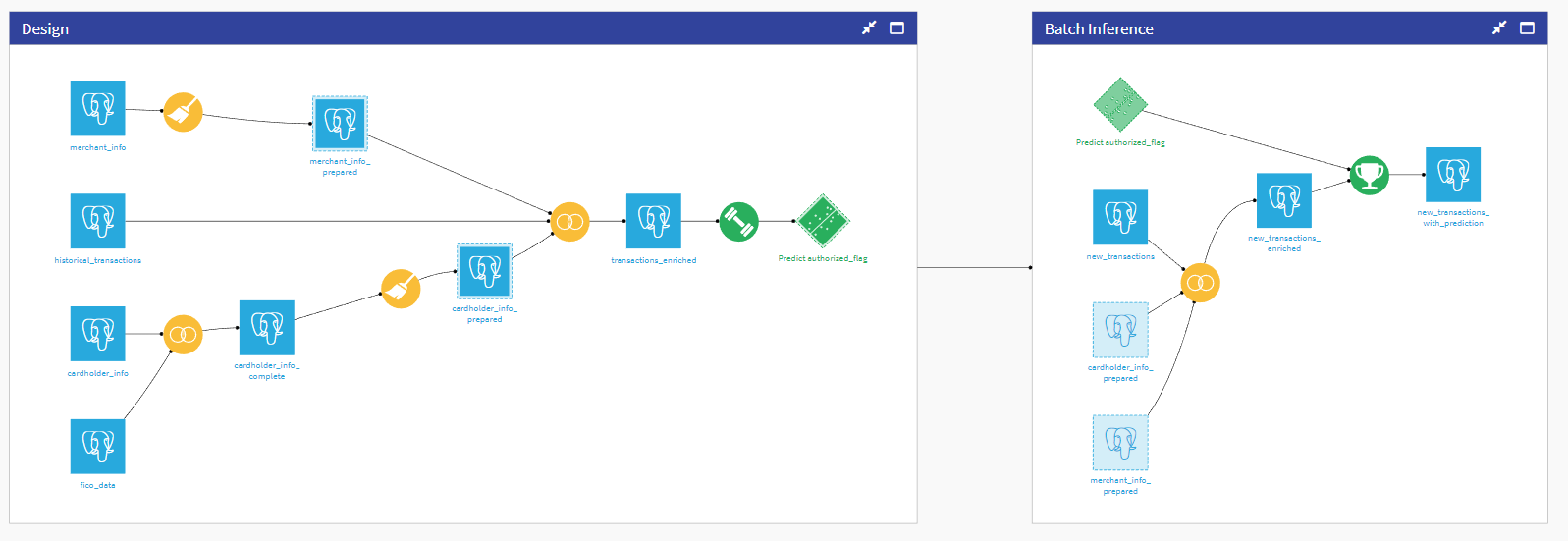

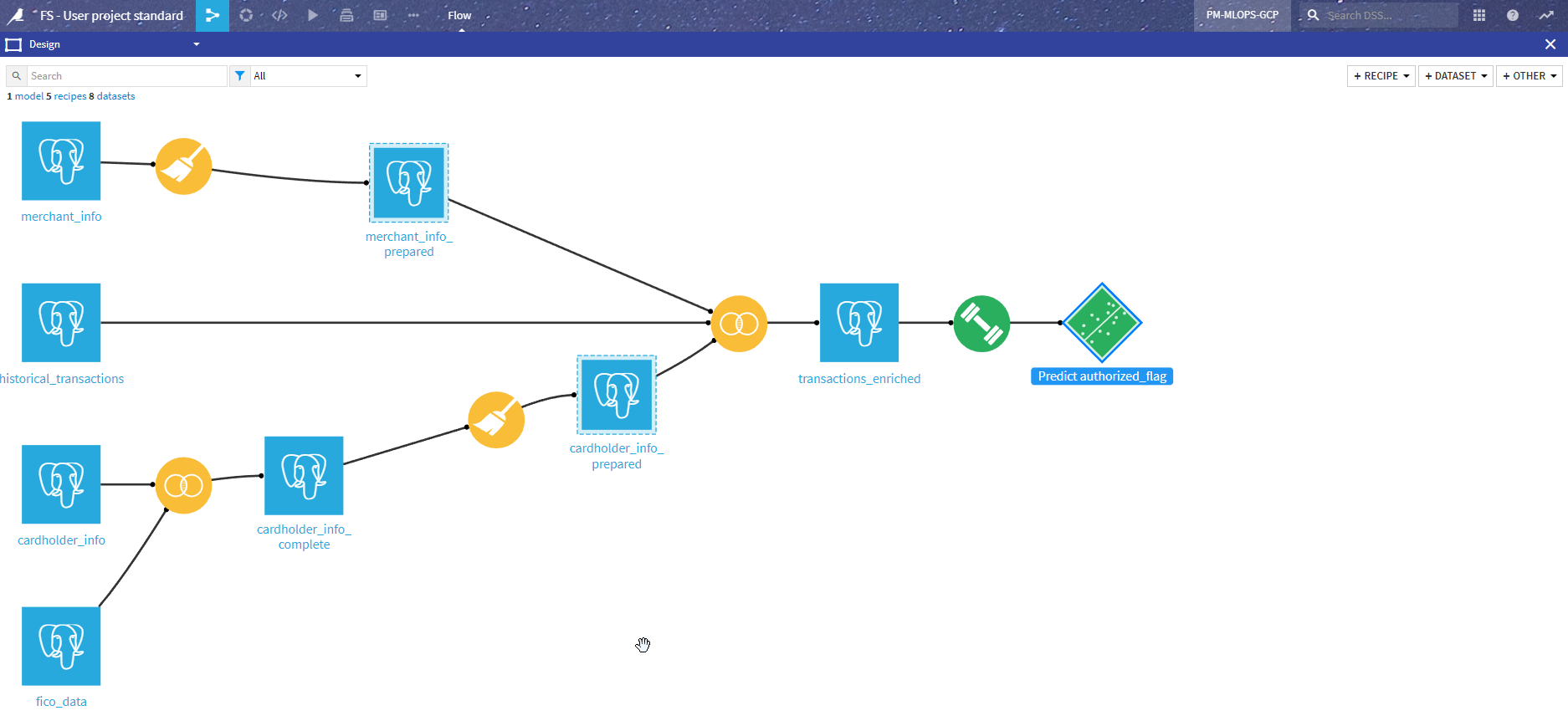

The Flow has been organized into two zones:

Design - This is where we fetch data from various sources, prepare it, and use it to design a model.

Inference - This is the actual batch Flow that takes a dataset with new un-predicted data and adds a prediction (This is the part that you automate with a scenario and run on your Automation node).

Let’s dive into each section a bit further.

Design#

We have four main sources of data:

The merchant data comes from the merchant_info dataset, and transformations are mostly on geo localization (both GeoPoint and name of state), resulting in merchant_info_prepared.

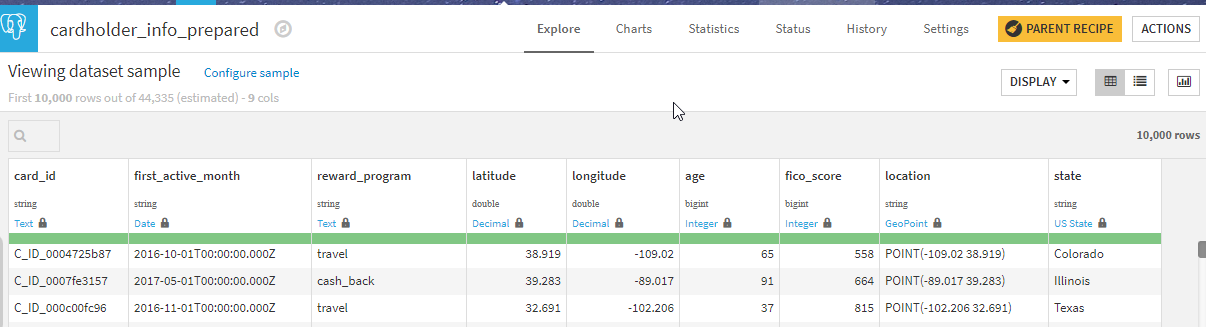

The cardholder data comes from the cardholder_info dataset. This is enriched by a Join recipe with FICO information (a risk score) from fico_data resulting in carholder_info_compute. Similar to merchant data, data preparation is mostly on geographical data, which produces cardholder_info_prepared.

The main Flow (historical_transactions dataset) is the historical data from past purchases with the resulting authorizations.

Those datasets are joined to produce the model input dataset transactions_enriched.

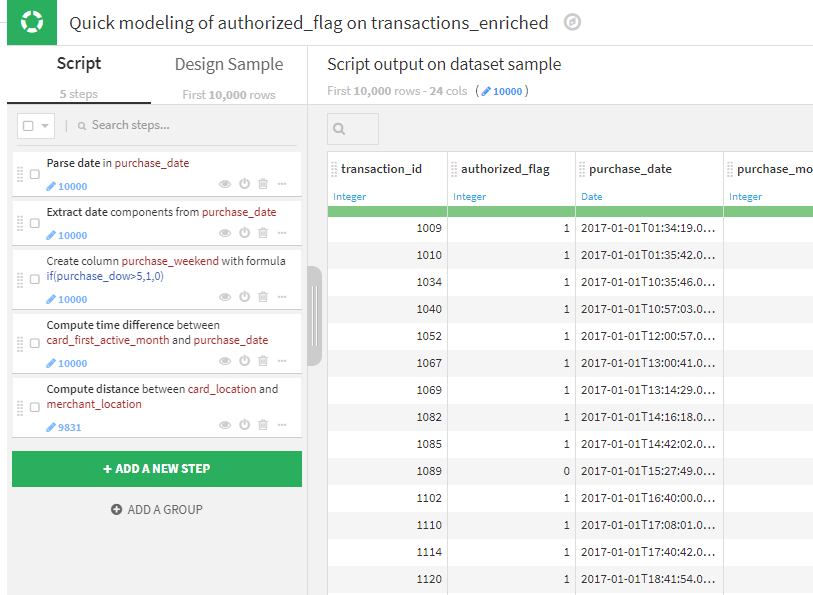

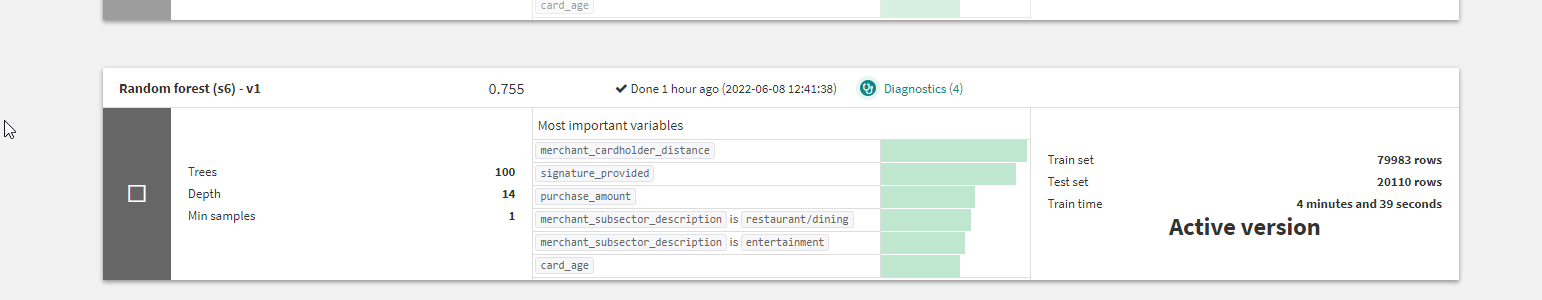

The model in itself isn’t complex. It’s worth noting that a last set of data transformations (pictured below) are performed in the model to compute some additional features. Adding these steps in the model will allow those transformations to be automatically integrated in the API package for online serving.

Then the model is trained and deployed.

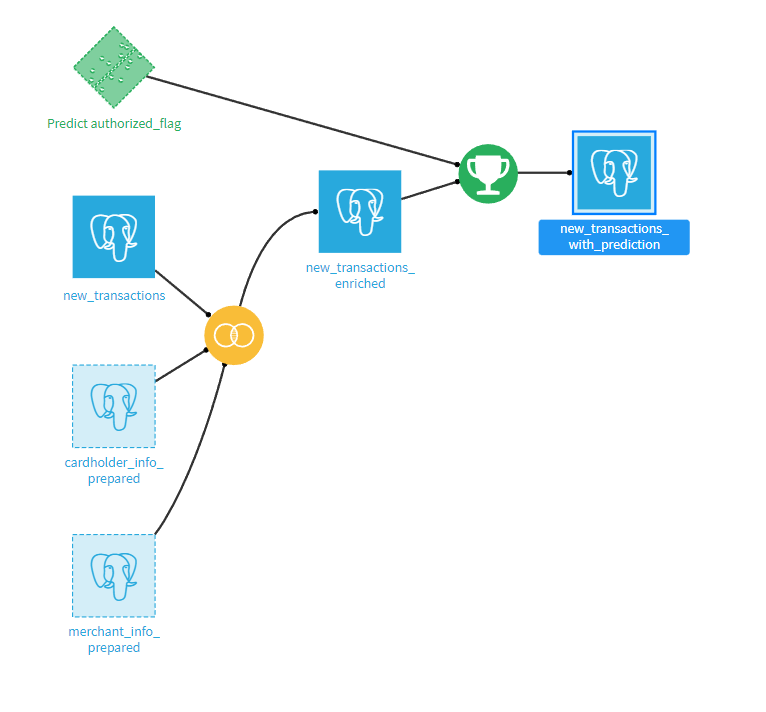

Batch inference#

This section is intended to be deployed as a bundle on an Automation node and automated with a scenario.

It takes all the new transactions, enriches them with the cardholder and merchant data (using the same dataset as in the Design section), and then scores using the deployed model.

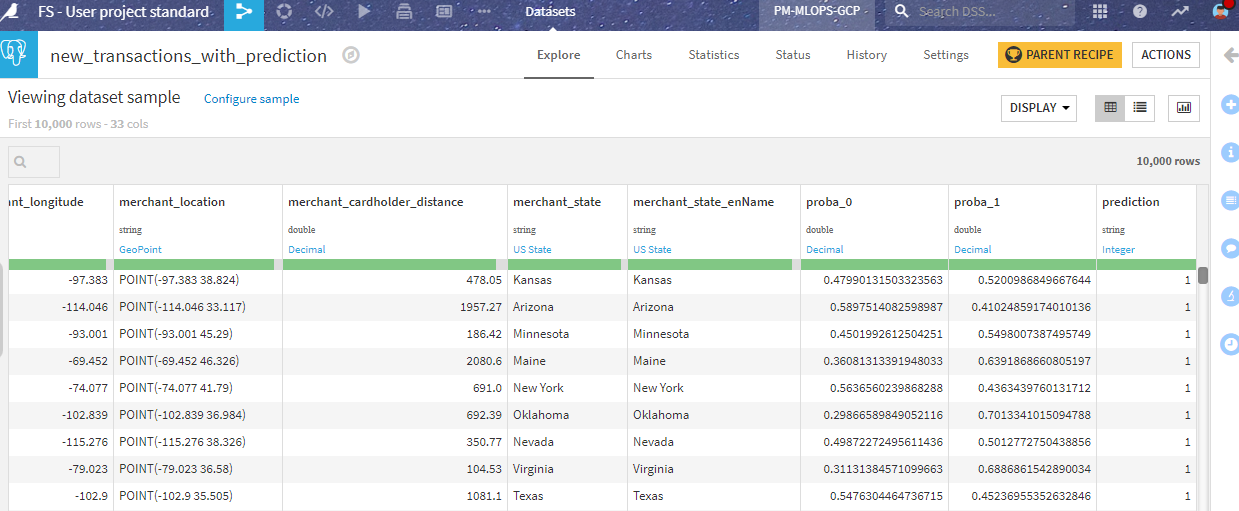

This produces an output dataset with the prediction:

Real-time inference#

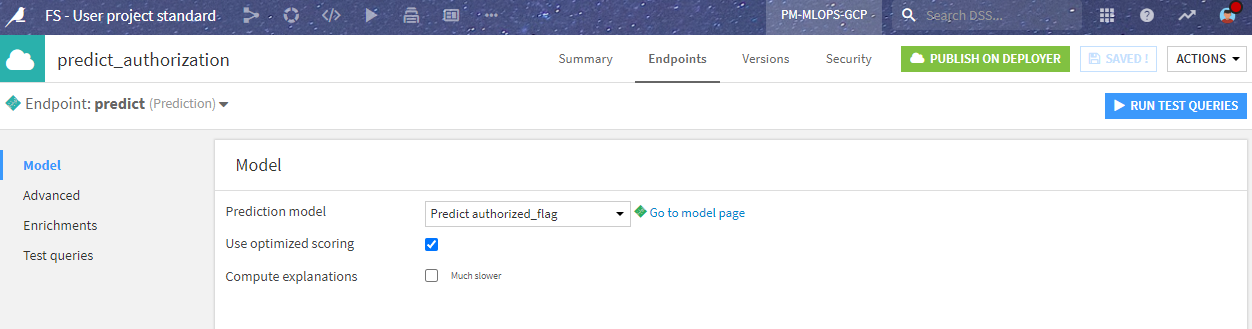

To use this model in various ways, we also deployed it as an API endpoint for real-time scoring. This is done by using the API Designer and defining a simple prediction endpoint with the saved model.

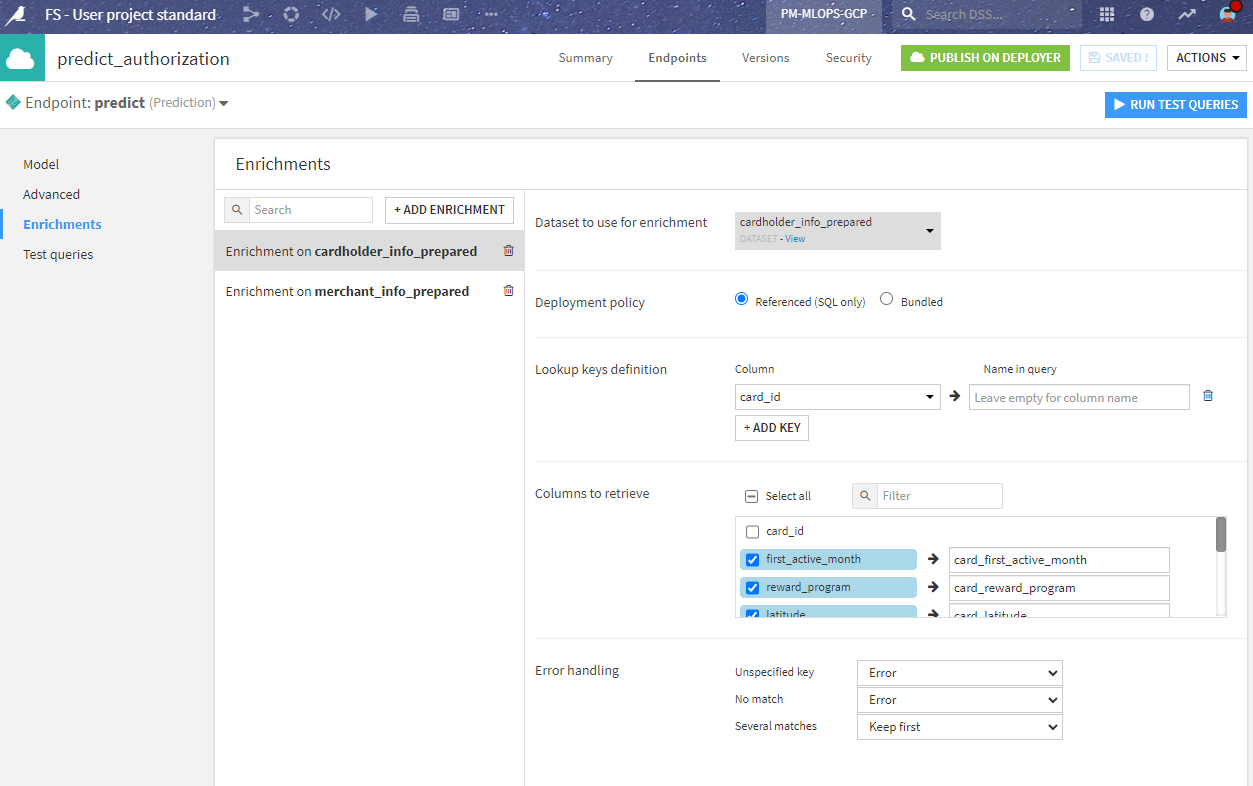

In the API definition, we have to consider that the third party will call the endpoint with only the new transaction data, not including the enrichments coming from the merchant_info and cardholder_info datasets.

This is solved in the endpoint definition where we tell the API node to enrich the incoming data directly from the SQL data source. This allows us to reproduce the Join in the batch inference phase in a simple and elegant way.

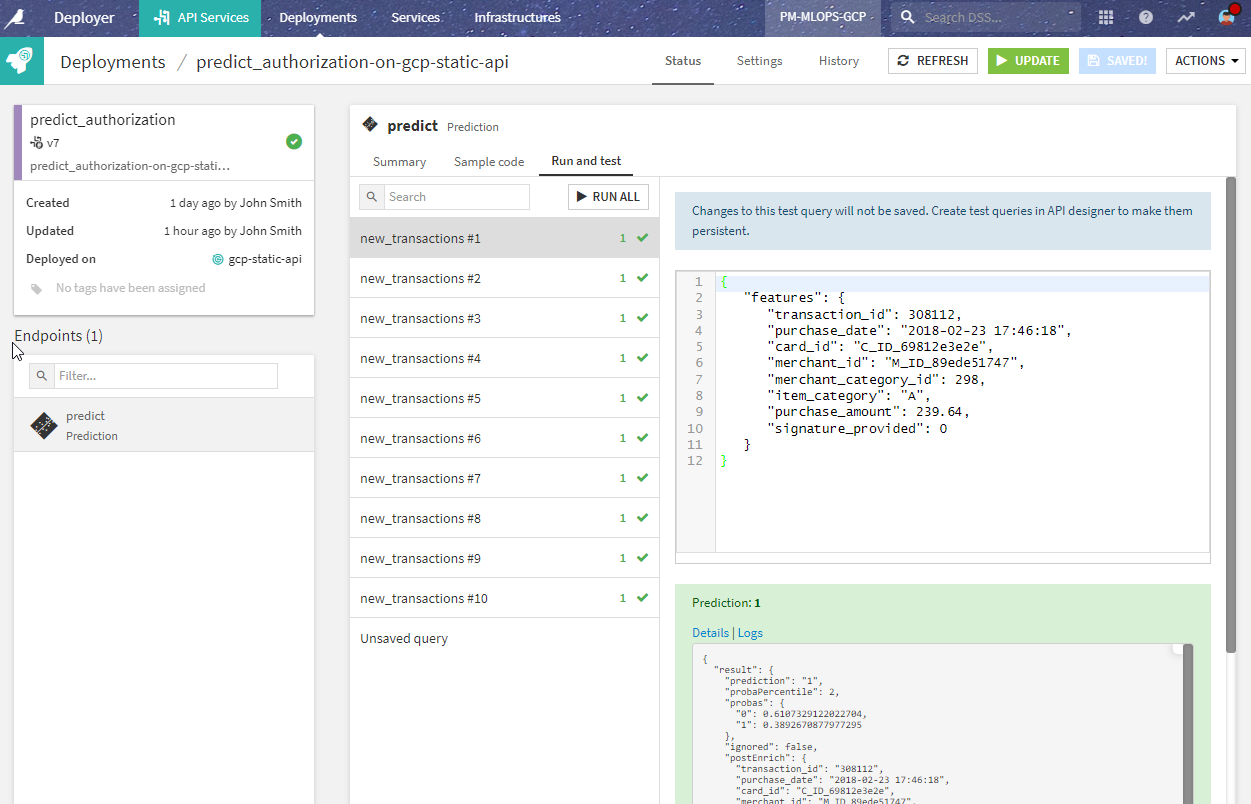

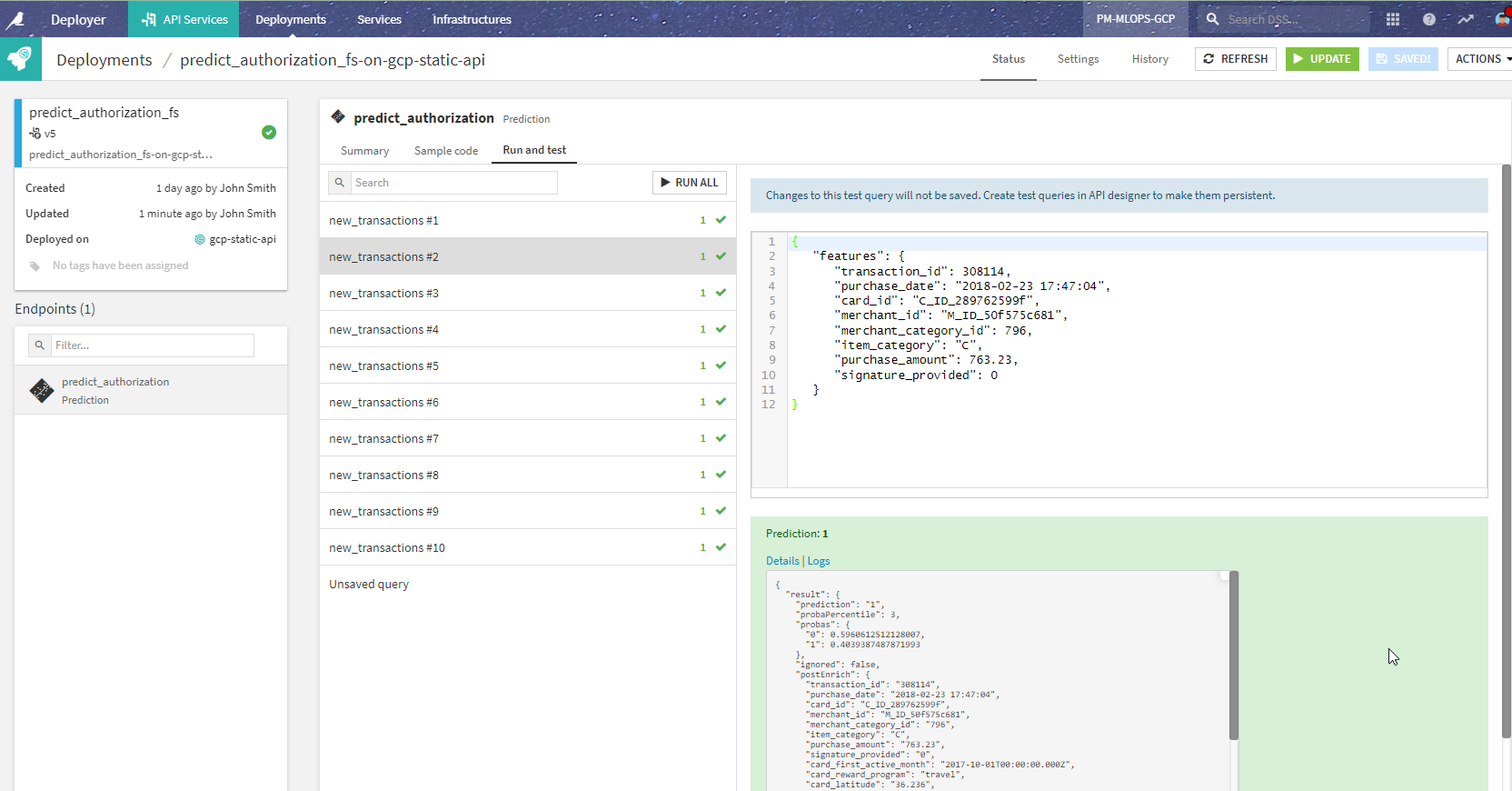

We then deploy the prediction endpoint to an API node using the API Deployer. Before doing so, we can test that the prediction endpoint is working correctly.

Potential issues#

This project is well designed and delivers the model to where it generates value. However, issues might arise from several angles:

Another project will (or may already!) use this same cardholder and merchant data. If the team members on this project aren’t the same, they will probably rework the same data preparation steps, doubling the work, and potentially introducing errors.

If there is a change in those inputs or preparation steps, this change may impact one project, but not the others.

Another team may manage the cardholder and merchant data, and they’d rather not see anyone wrangling it on their own, without knowing the data’s origin, quality, or limitation of use.

In the end, you will face the need for factoring and organizing the management of those datasets containing the features needed for your ML models. This is what a Feature Store is all about.

In the next section, we will see how to evolve this project to solve the problems mentioned above. Buckle up!

Feature group generation#

The first step is to consider that the computation of the enriched datasets, both for design and inference, needs to be done in a specific project, that may have different ownership.

With that in mind, we created a new project called Feature Group and moved the data processing steps to that project.

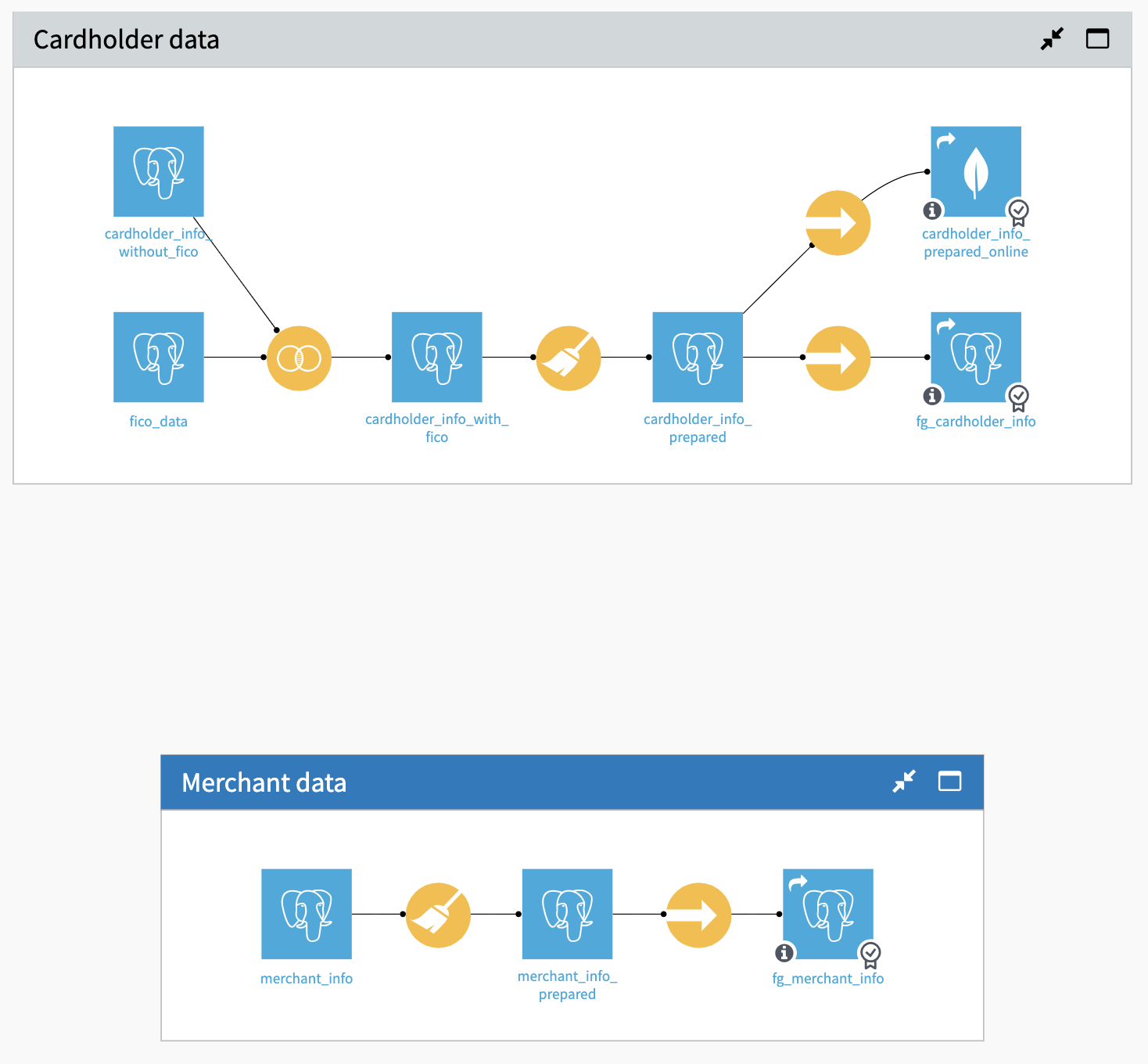

Building a feature group ingestion and transformation with the Flow#

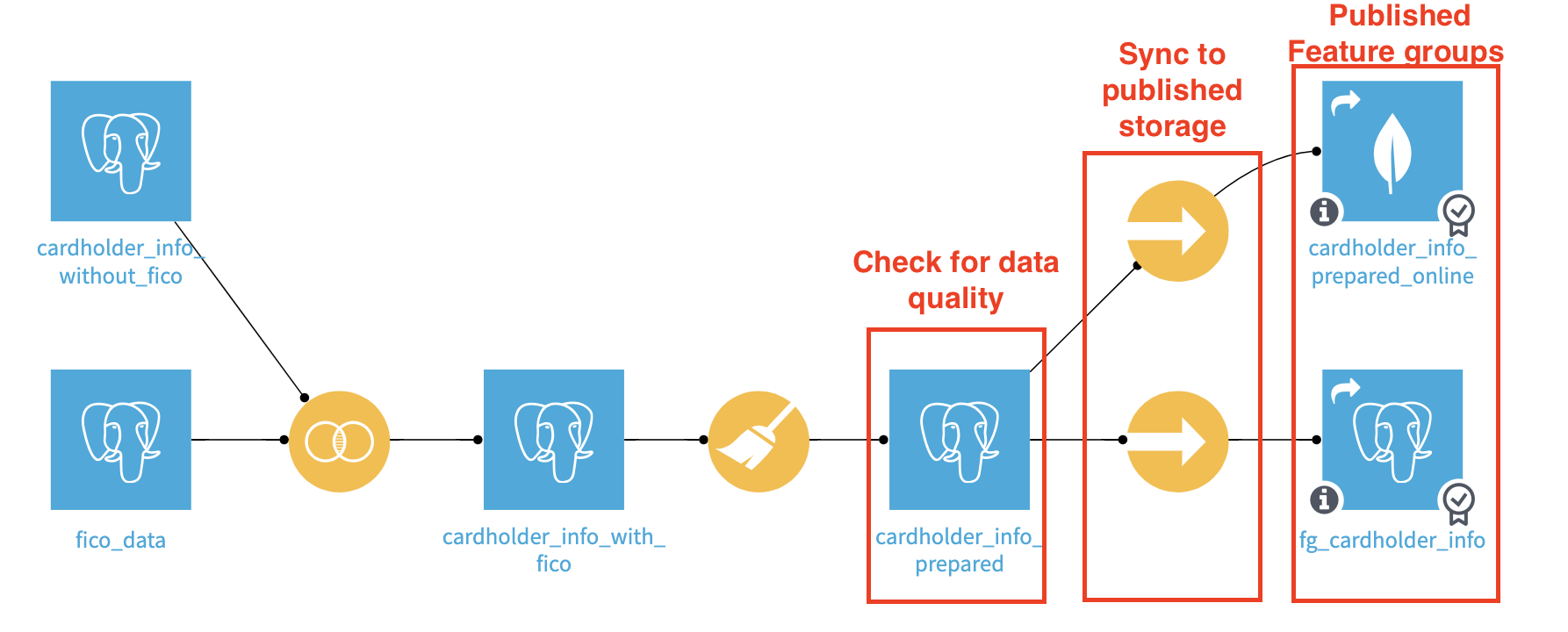

We haven’t changed the data processing part (That is the whole point). However, we’ve added some specific steps, let’s look into the details for the cardholder dataset.

The Sync recipes provide an opportunity to put data checks on the cardholder_info_prepared dataset. When automating the update with a scenario, we can then run the checks at this stage and not update the published feature group if its quality metrics aren’t good enough. After failing a check, updating the published feature group will require a fix or a manual run.

The Sync recipe isn’t mandatory, but allows us to automate the quality control of the carholder_info_prepared dataset and not update the final shared datasets in case of problems.

As an addition to the previous setup, you can configure the Sync to an alternative infrastructure for online serving. Even though PostgreSQL could handle the load in this example, here we’re pushing the data in a reading-optimized key-value database (MongoDB). This can be used for online serving typically.

Note

An alternative is to use a “bundled” enrichment on the API endpoint. Using this strategy requires setting up a local database on your API node, and Dataiku takes care of bundling the data from the Design node and inserting it into this local database. This ensures a fast response time, as explained in the reference documentation on bundled data.

Since we’re organizing the data preparation process, we take this as an opportunity to ensure high quality. This is especially important if many projects will use the resulting feature group.

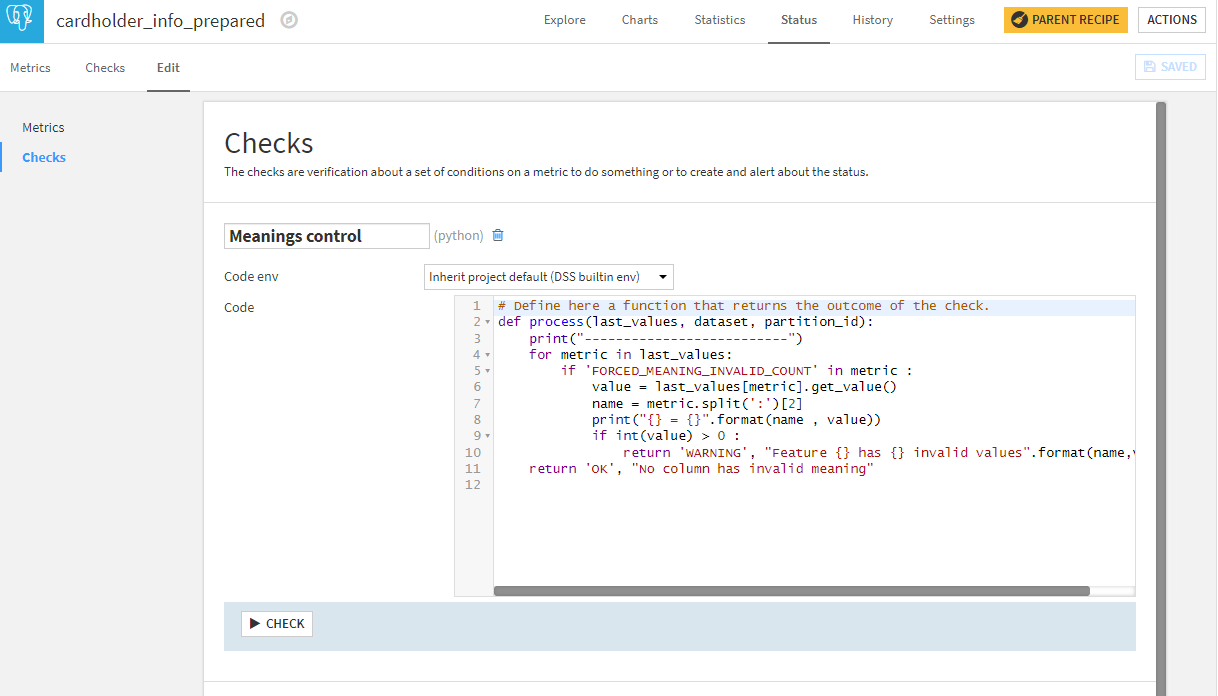

Monitoring feature groups with metrics & checks#

We want high-standard data quality for our feature group. To reach that level, the key capabilities to use are metrics and checks.

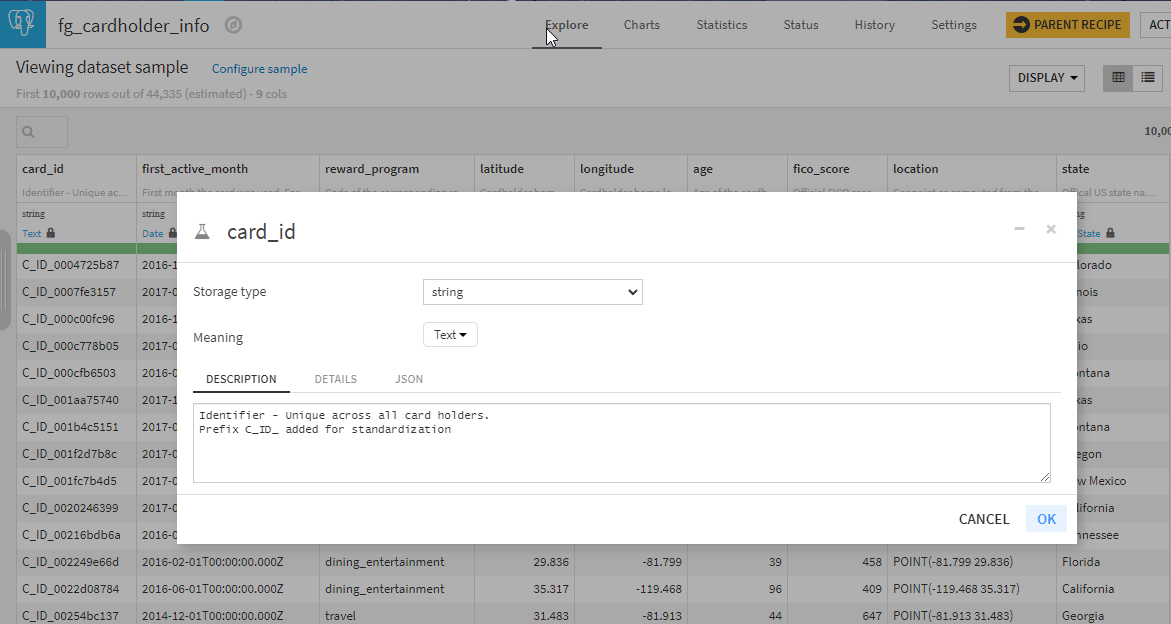

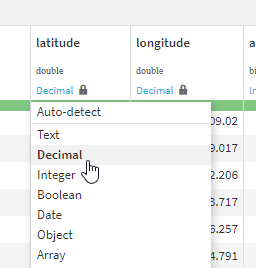

The first control we added is to clearly identify the schema and the meanings.

We did this by editing the column schema for each column and locking the meanings.

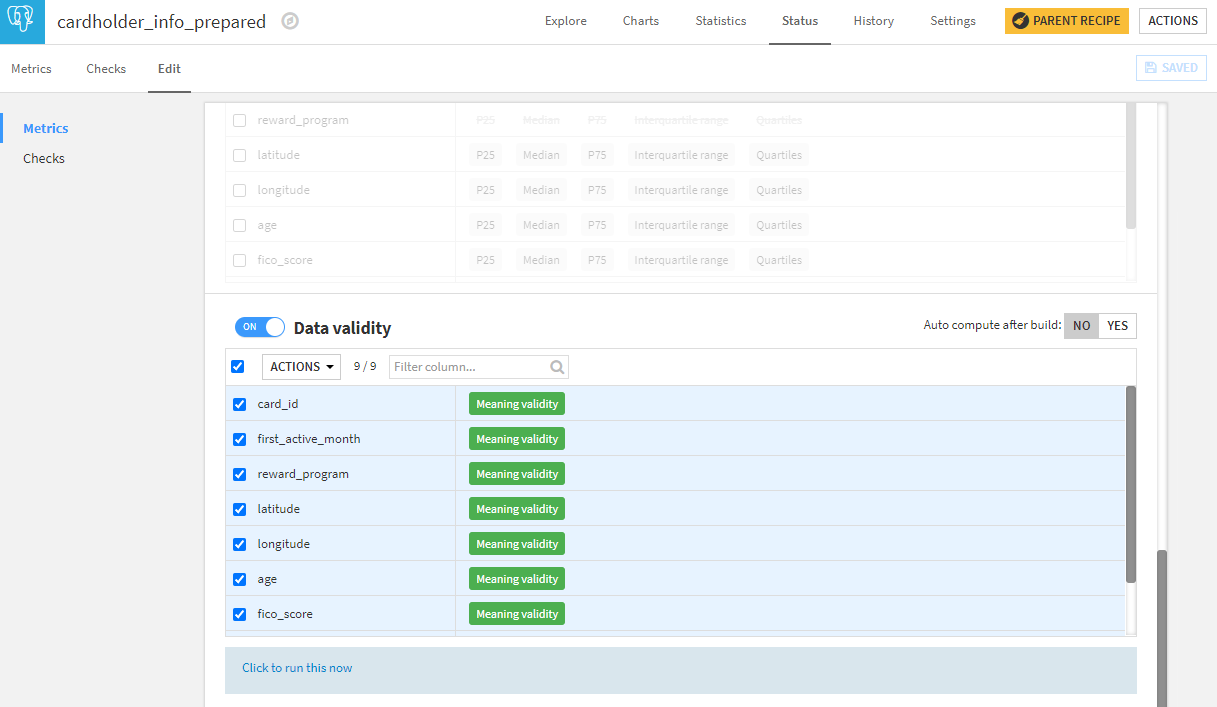

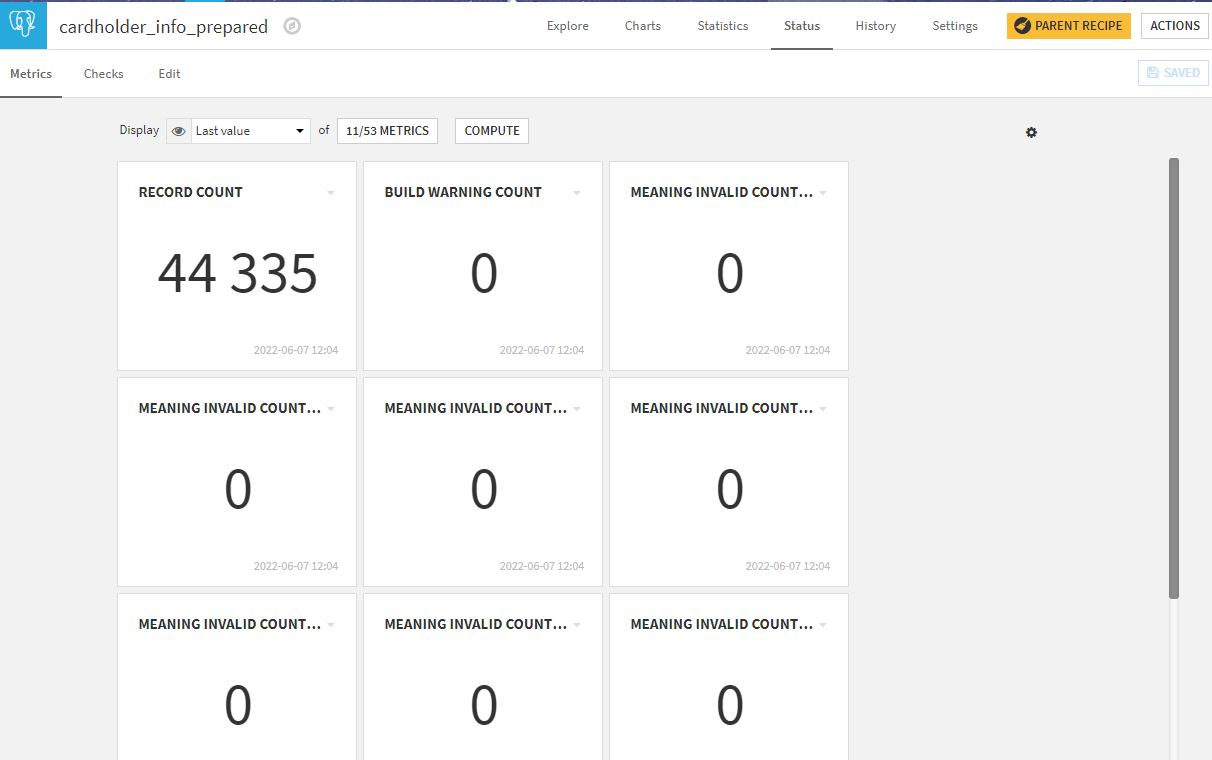

Then we added metrics, in this case on meaning validity. You can add more controls depending on your needs and time.

This provides more metrics on the dataset.

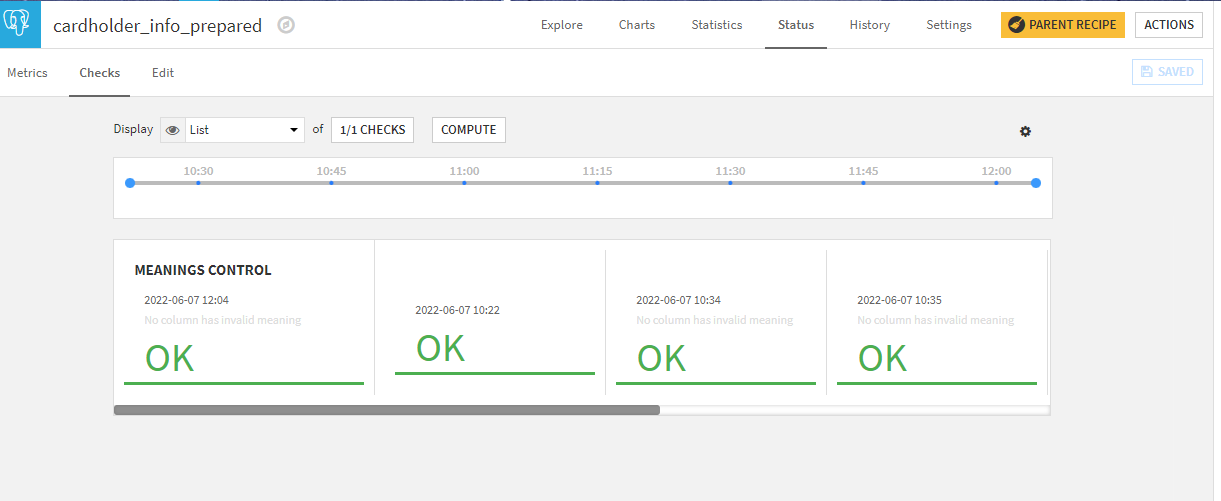

Finally, to leverage those metrics, we create checks. In this case, we created a custom Python check that will control all meaning metrics in one go and raise a warning if any are invalid.

In the end, that gives a single check on the dataset as to whether any column has an invalid meaning compared to the one we’ve locked.

With this check in place, we can use a scenario to automate the quality control of the dataset during updates.

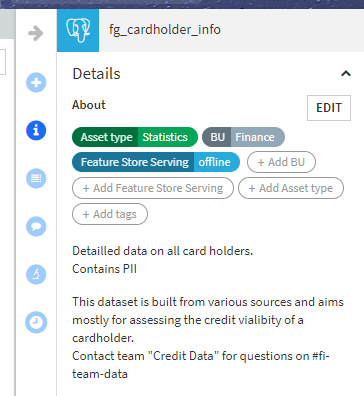

Promoting as a feature group#

Now that we have a well-documented dataset of good quality, we can promote it as a feature group.

Note

See How-To Feature Store for a more detailed overview of the mechanics of adding a dataset to the Feature Store.

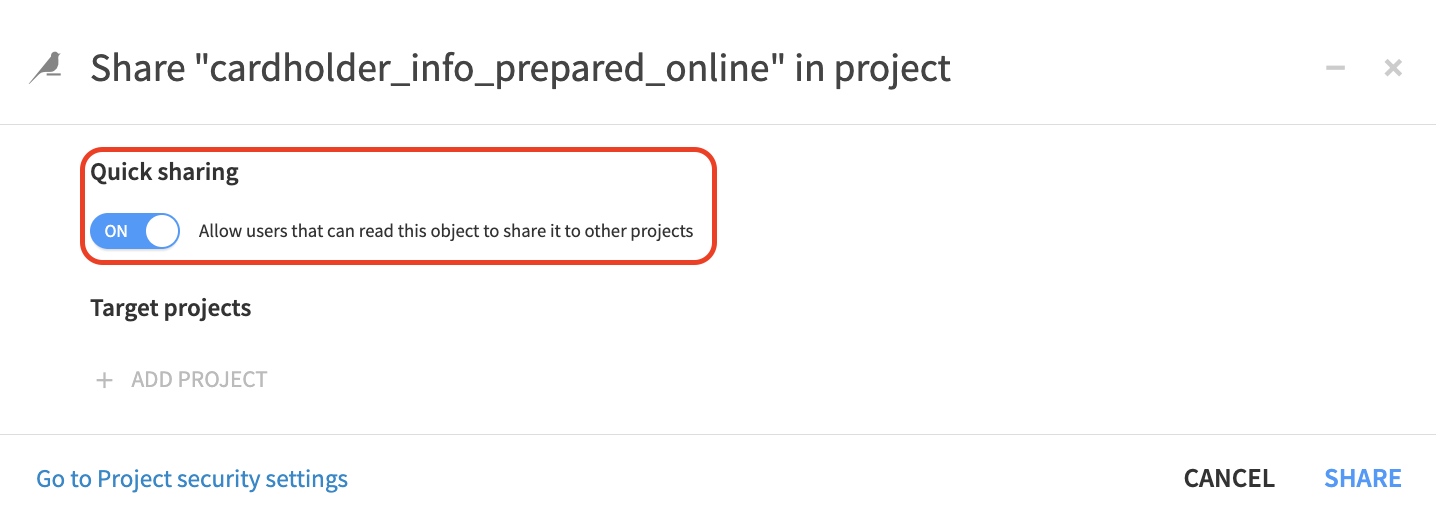

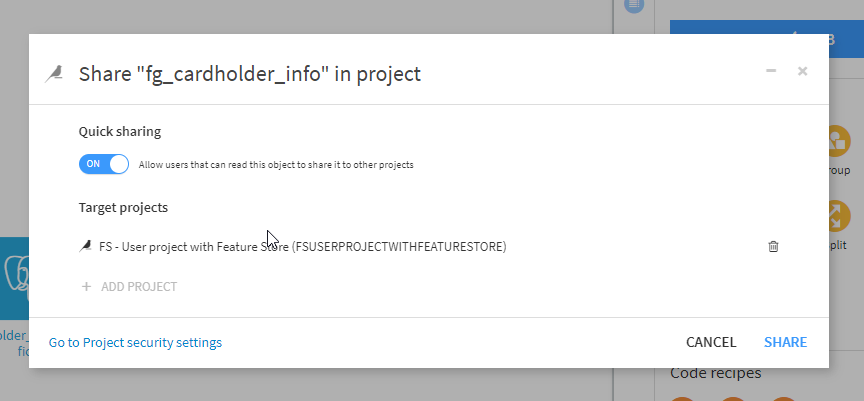

Because feature groups are intended to be reused in many places, we strongly recommend to facilitate the ability for users to get access to them. Your organization can achieve this goal by either by allowing self-service import using Quick sharing or to activate the request feature, allowing users to request and be granted access directly within Dataiku.

Note

Publishing to feature store and quick sharing are new capabilities of Dataiku version 11.0. If you have an earlier version of Dataiku, you can use the standard sharing mechanism and perform discovery with the Dataiku Catalog.

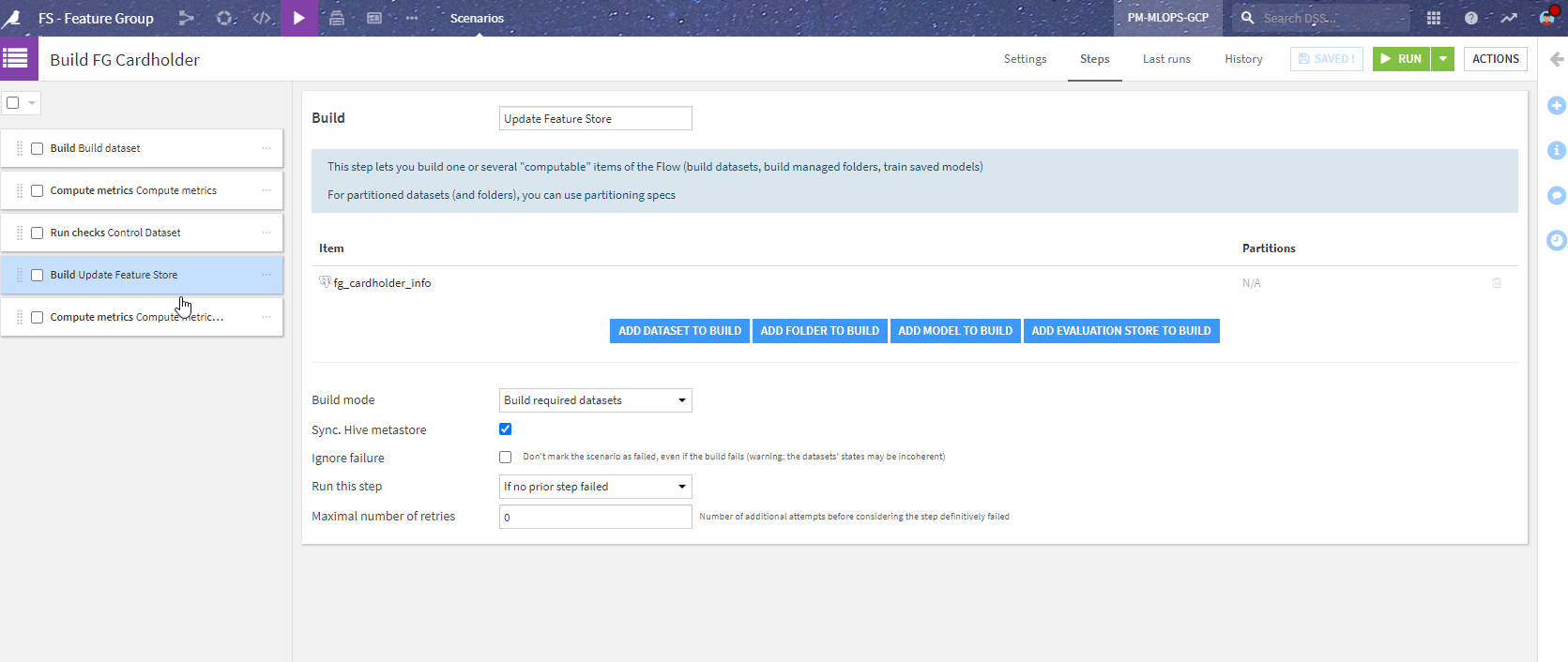

Automate feature group generation with scenarios#

Scenarios are a convenient way to automate the update of a feature group. You can keep full control over the building process by leaving it as a manual process. However, you can also automate it, leveraging the gateways put in place with the Sync step and check to make sure this automation stops in case of issues.

Here is an example of such a scenario:

To explain a bit more about the steps shown in the image above:

Build dataset - builds cardholder_info_prepared (the dataset before the Sync).

Compute metrics - computes the metrics on this dataset.

Control Dataset - runs the checks. This stops the scenario if an error in the check is encountered (so that the Feature Groups aren’t updated).

Update Feature store - runs the Sync recipes to the actual published Feature Group.

Compute metrics - computes the metrics on the Feature Groups.

With this scenario, we ensure that the data in the Feature Group is both up-to-date and of good quality. The last step is to add a trigger to the scenario. It could be, for example, a dataset modified trigger (on the initial datasets) or a time-trigger (every first day of the month for example). With a proper reporter in case of error to look at what’s wrong, this can all be automated.

Redesigning the project with feature groups#

Now that we have a specific project to create ready-to-use powerful feature groups, we can either revisit the original project to use them or create a new project with this new approach. Let’s see the different steps.

Adding a feature group to a project#

Note

The feature store section is only available starting with Dataiku version 11.0. If you have an earlier version, you can still perform this discovery within the Dataiku Catalog.

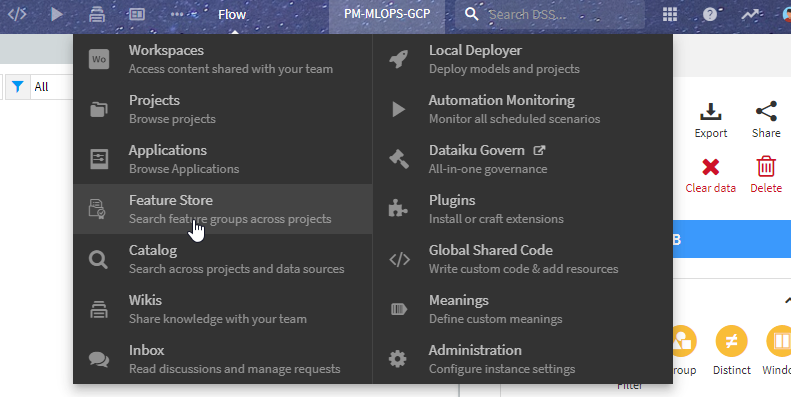

The starting place is to look for feature groups. From the Flow, you can click on + New Dataset > Feature Group or directly navigate to the feature store section of Dataiku from the waffle () menu near the top right.

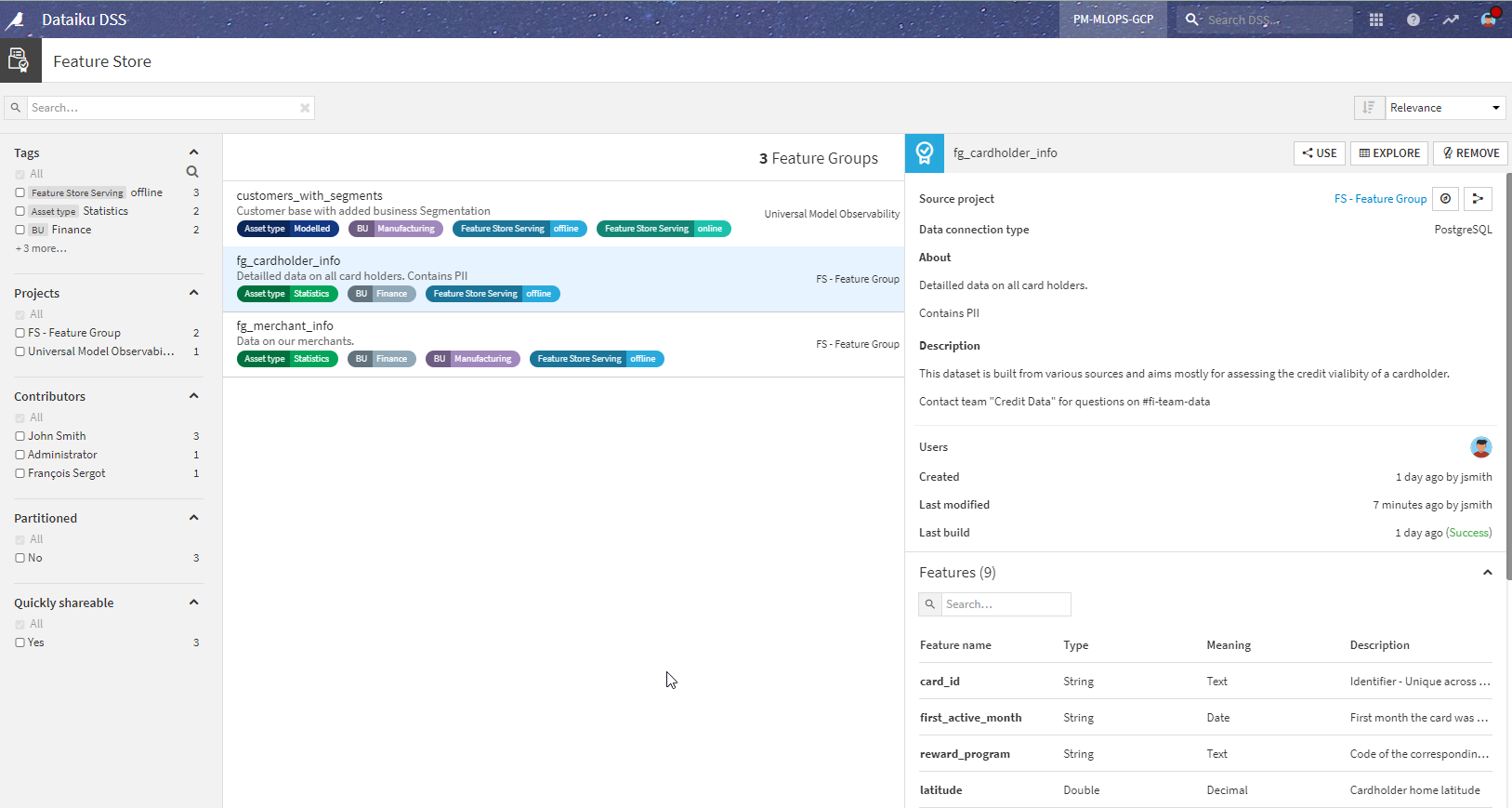

In this place, you will be able to see all feature groups with their details and usage (hence the importance of documenting them). Here we can see the two feature groups we created in the project above.

By clicking on the Use button, these feature groups are made available in your project.

The redesigned project#

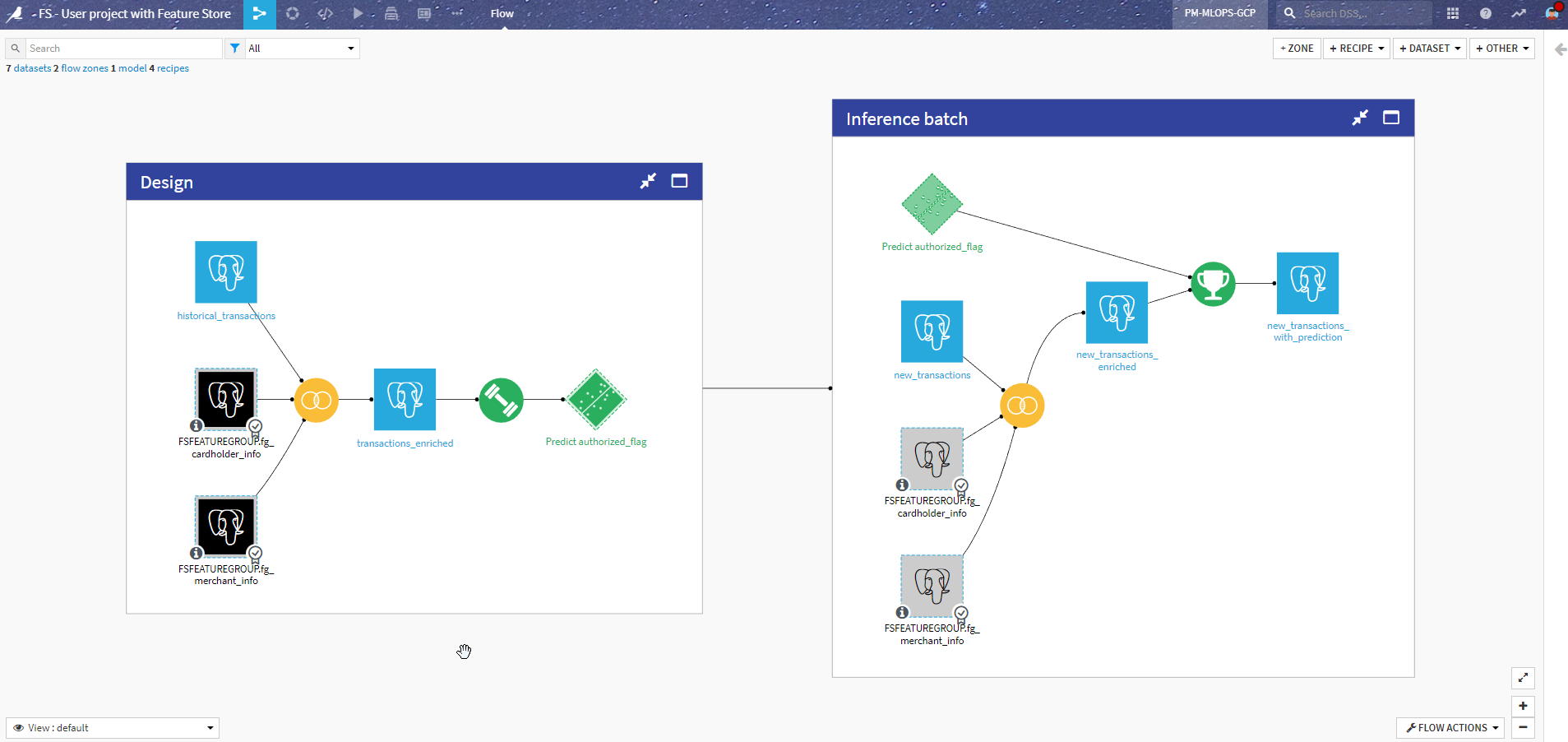

After incorporating the feature groups into the Flow instead of the original data preparation steps, here is how it looks:

Let’s review the changes compared to the previous version.

Design using feature groups#

The most visible change is that we removed the data processing on the cardholder and merchant datasets. We switched to using the feature groups instead, as shared datasets (appearing black in the Flow). Since the schema is the same, this change was pretty straightforward.

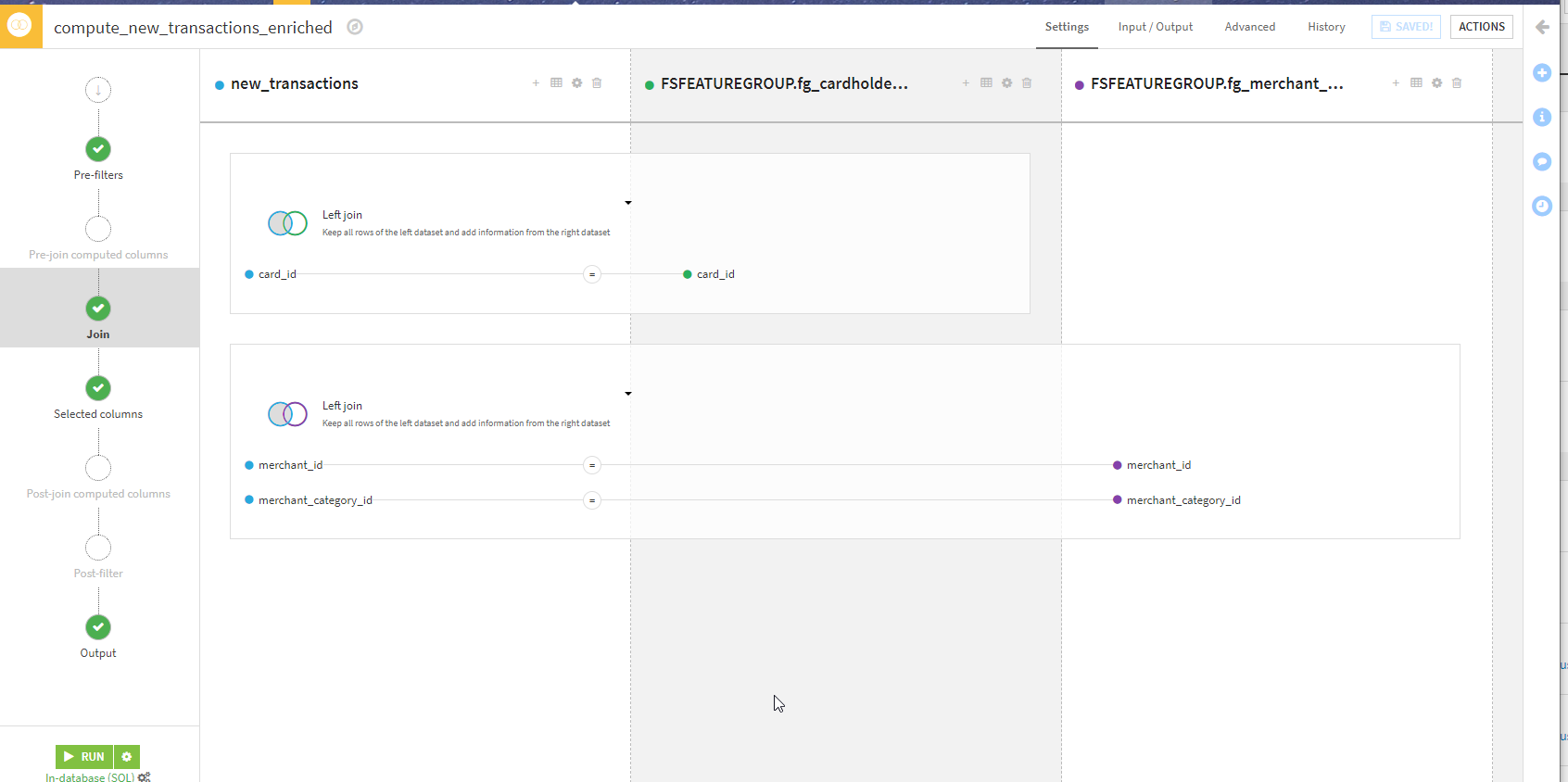

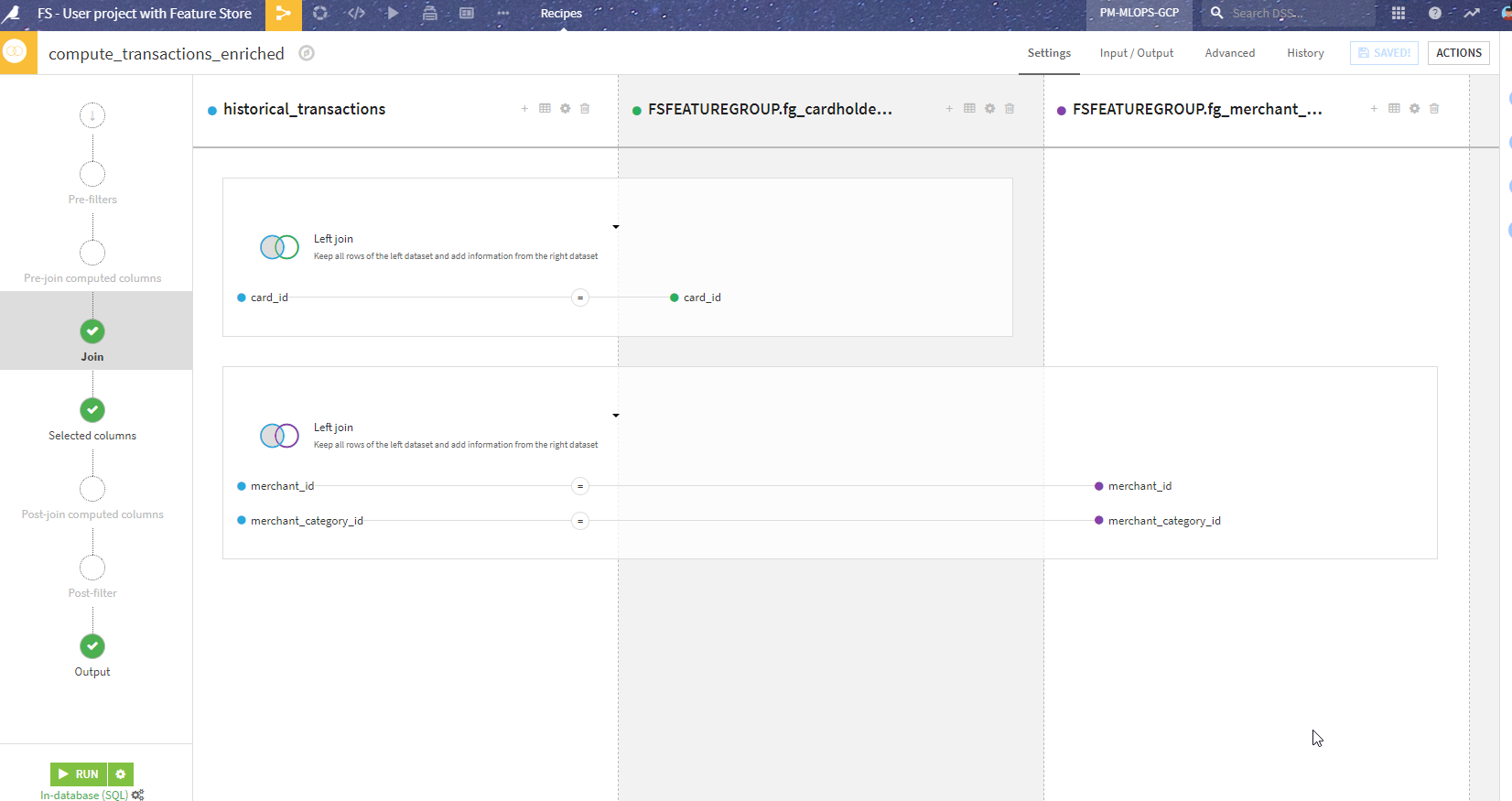

Batch inference using feature groups (offline scoring)#

This is the part in the Inference batch zone where we replace the local datasets with feature groups. This is also pretty seamless and with the same Join as above in the design.

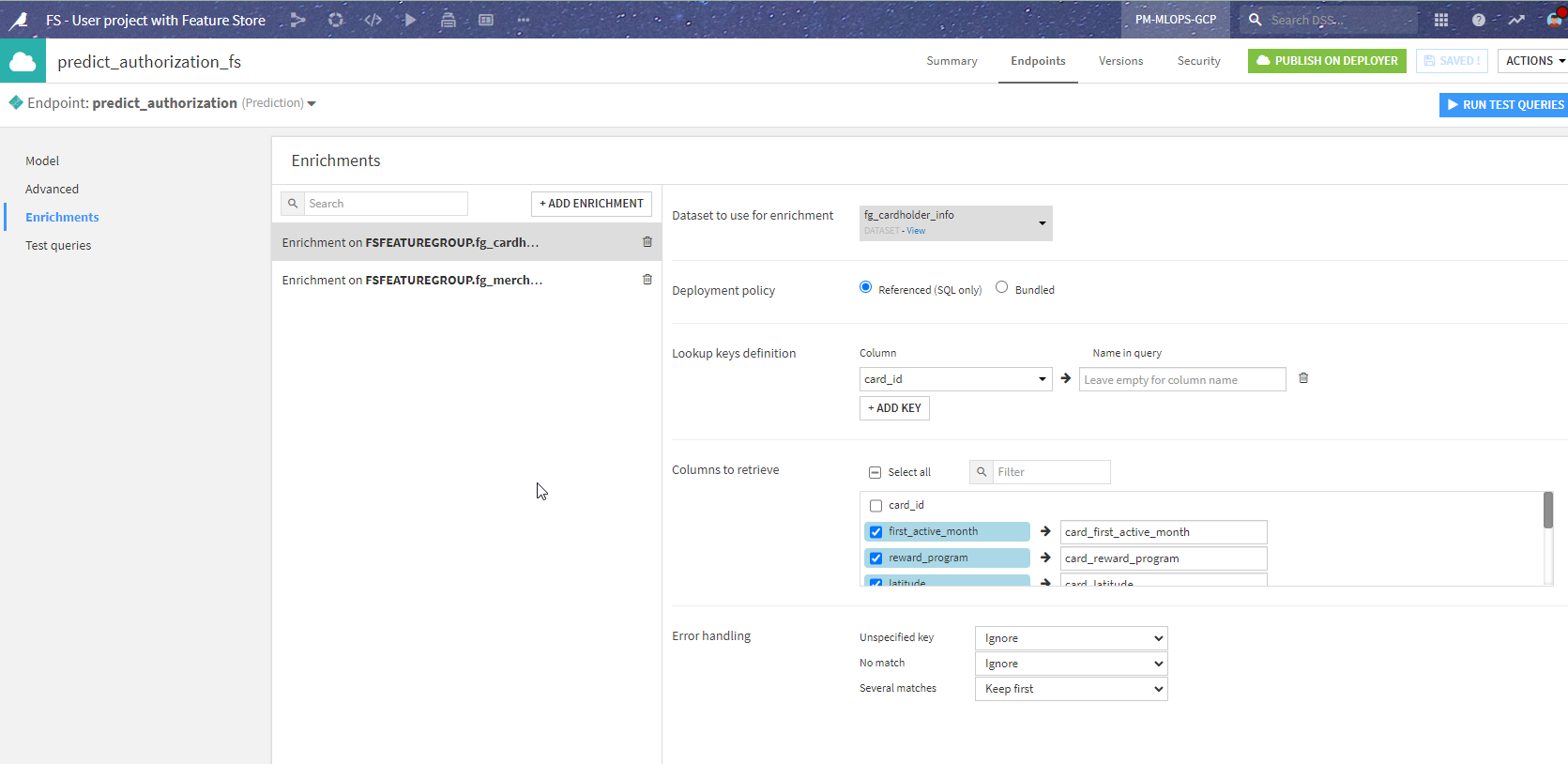

Real-time inference using feature groups (online scoring)#

This one requires some changes in the API endpoint definition, similar to the logic above: replace enrichments from local datasets to enrichments from shared datasets. This is how the endpoint in API Designer now looks:

This gives the same results from the API client perspective once repackaged and redeployed (in just a few seconds, thanks to API Deployer).

Conclusion#

What we’ve seen is that the biggest work is moving the data preparation to a dedicated project, rather than redesigning the project itself using the feature groups.

In the end, we have a simpler project and clearer separation between building the data and using the data for machine learning. Because everything happens in one tool, suitable for both ML engineers and data specialists, there is no complex transition between the two worlds. And you keep the same security, auditing, and governance framework throughout your lifecycle.

Note

For more information, consult the feature store reference documentation.