Concept | Model deployment to the Flow#

Watch the video or read the summary below.

After you have trained a model in the Lab, iterated on its design, and achieved satisfactory results, the next step is to put the model to work by scoring new data.

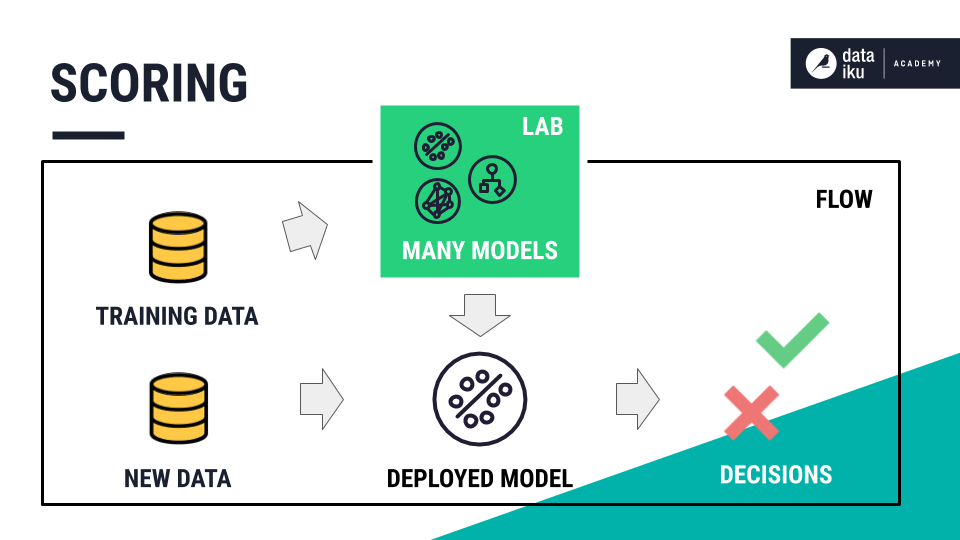

Scoring new data#

This step of the model lifecycle is called scoring. We want to feed the chosen model new data so that it can assign predictions for each new, unlabeled record.

In this case, we want to use one model to predict which new patients are most likely to be readmitted to the hospital.

Deploying a model to the Flow#

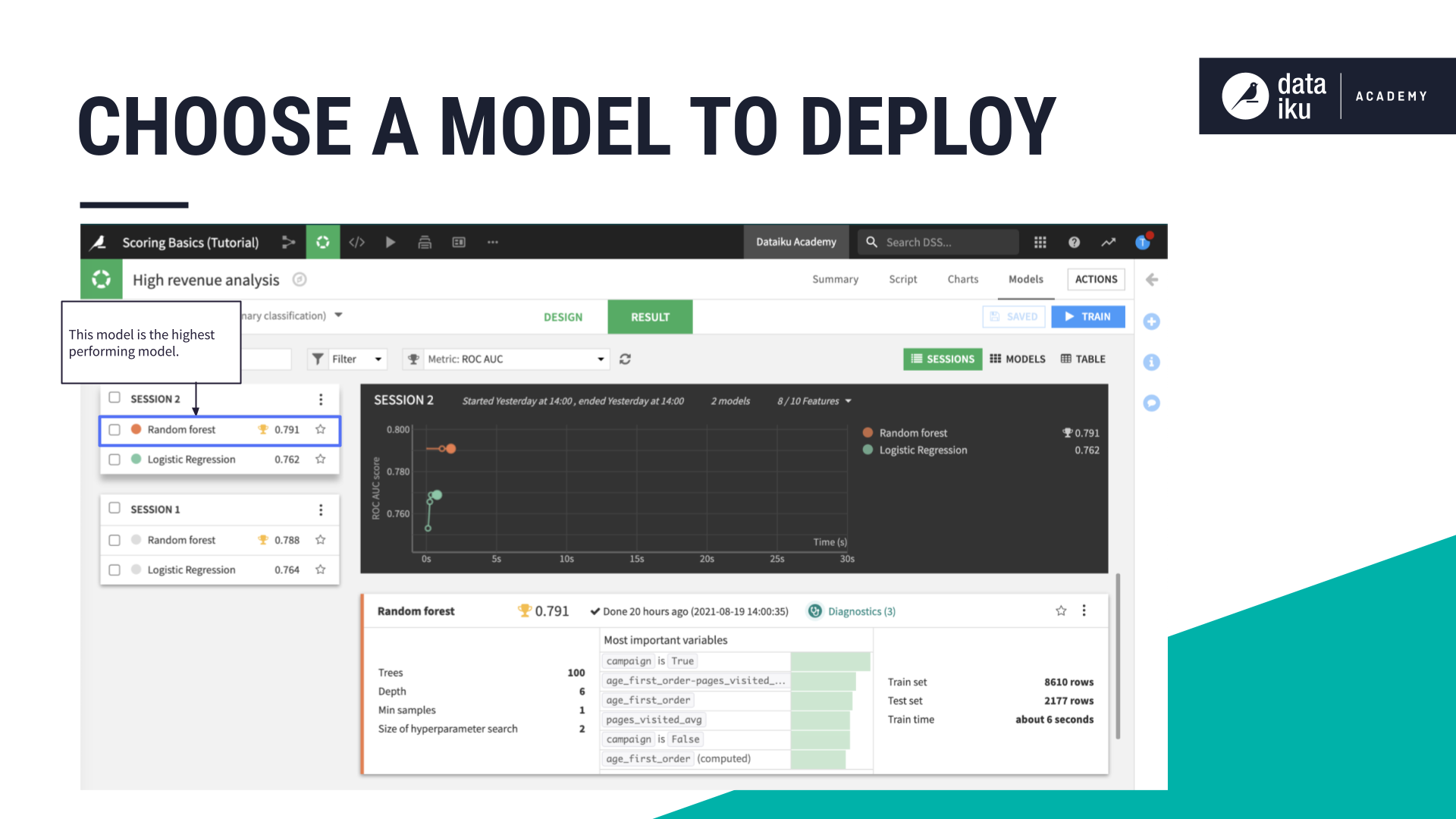

Model training is completed in a visual analysis, which is part of the Lab, a place for experimentation in Dataiku separate from the Flow. To use one of these models to classify new records, you’ll need to deploy it to the Flow.

Which model should you choose to deploy? In the real world, this decision may depend on a number of factors and constraints — such as performance, explainability, and scalability. Here let’s just choose the best performing model.

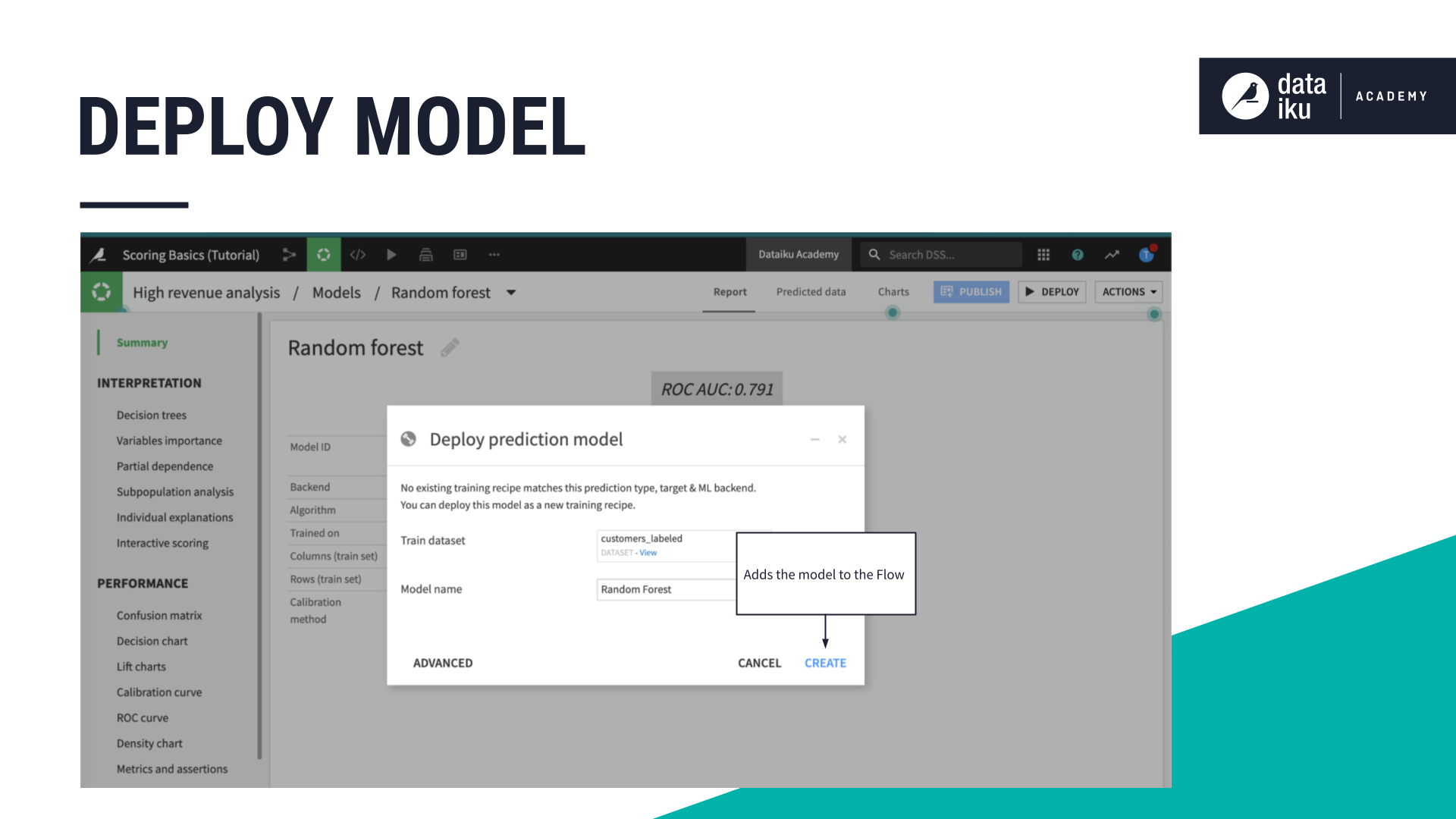

The dialog window for deploying a model is similar to that of other recipes. Like with most recipes, we have an input dataset. Instead of naming the output dataset, we can rename the model. Clicking Create brings the model from the Lab into the Flow where our datasets live.

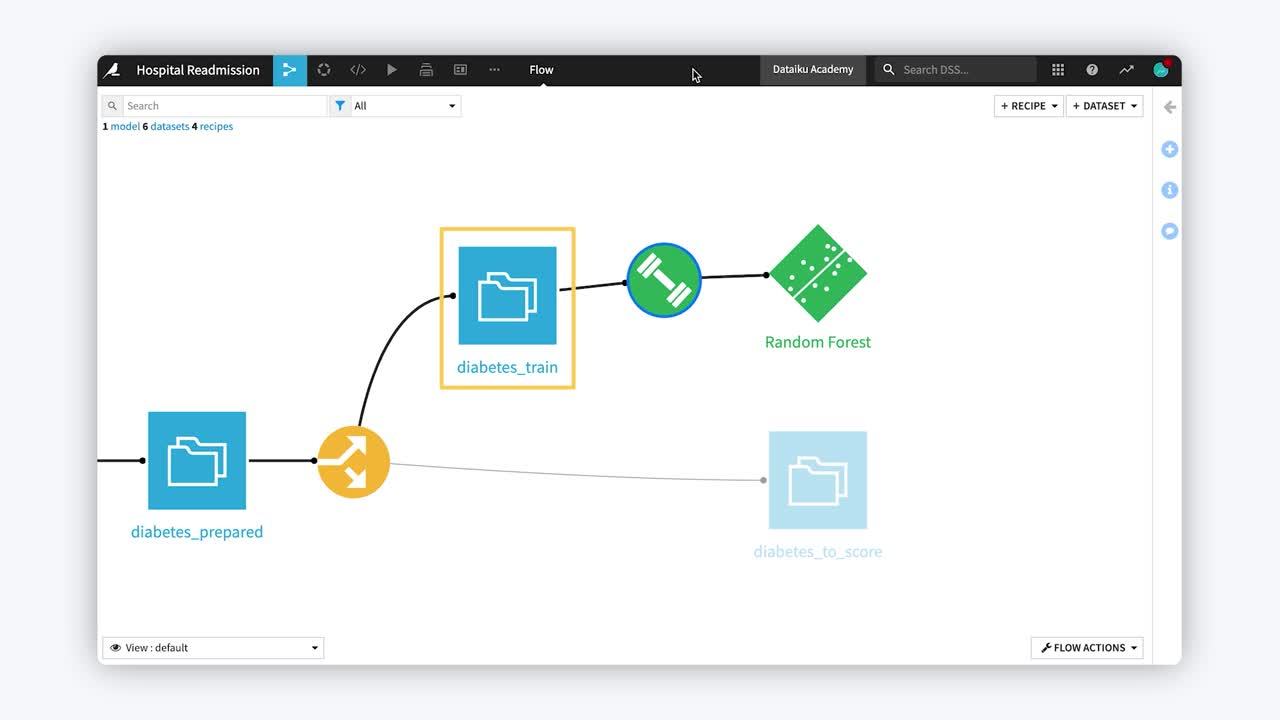

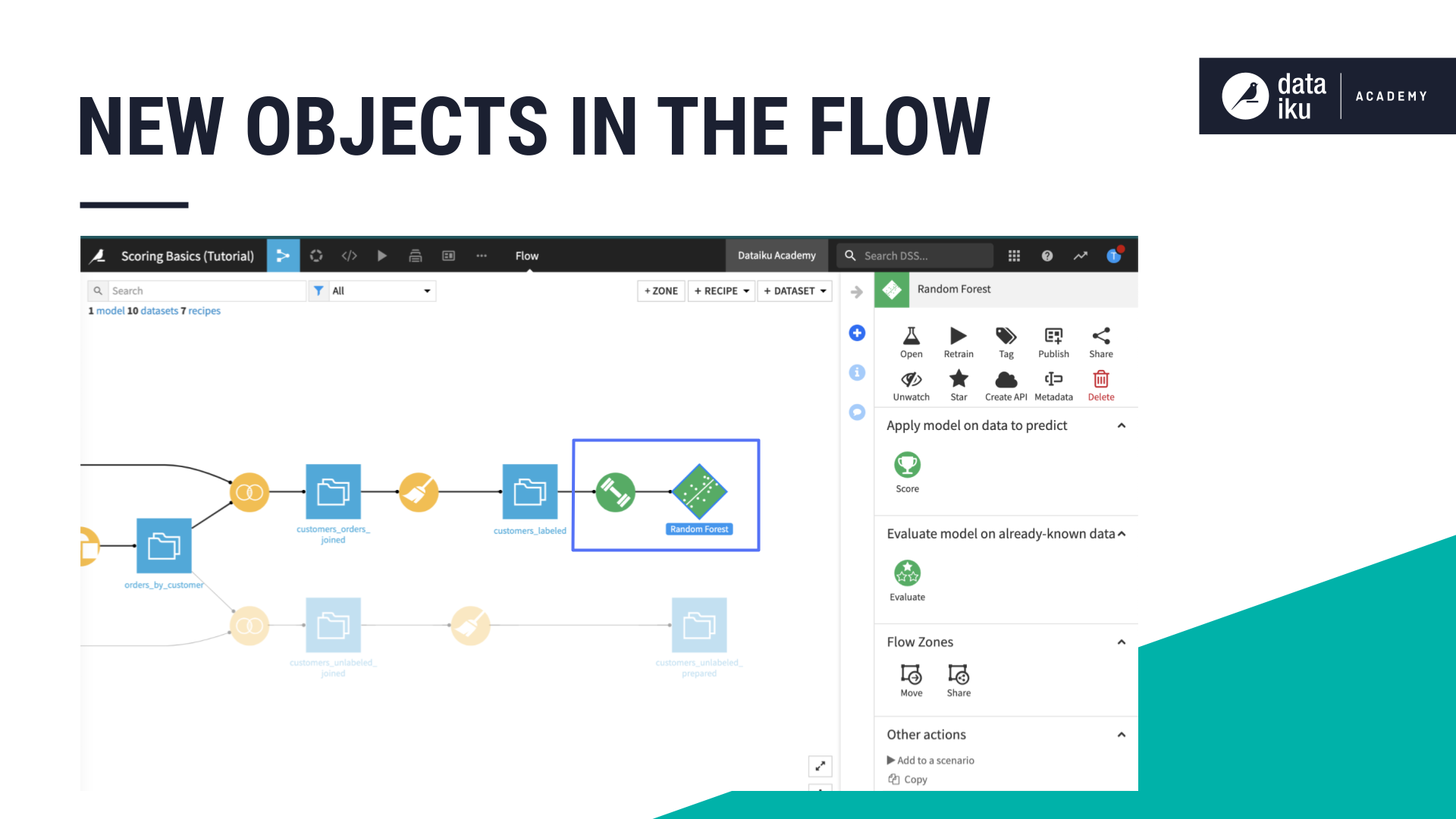

Deploying a model adds two objects to the Flow:

The first is a special kind of recipe, called the Train recipe. Just like other recipes in Dataiku, its representation is a circle. Instead of yellow or orange though, the green color lets us know it’s for machine learning.

Most often, recipes take a dataset as input and produce another dataset as output. The train recipe takes one dataset as its input but outputs a model in the Flow.

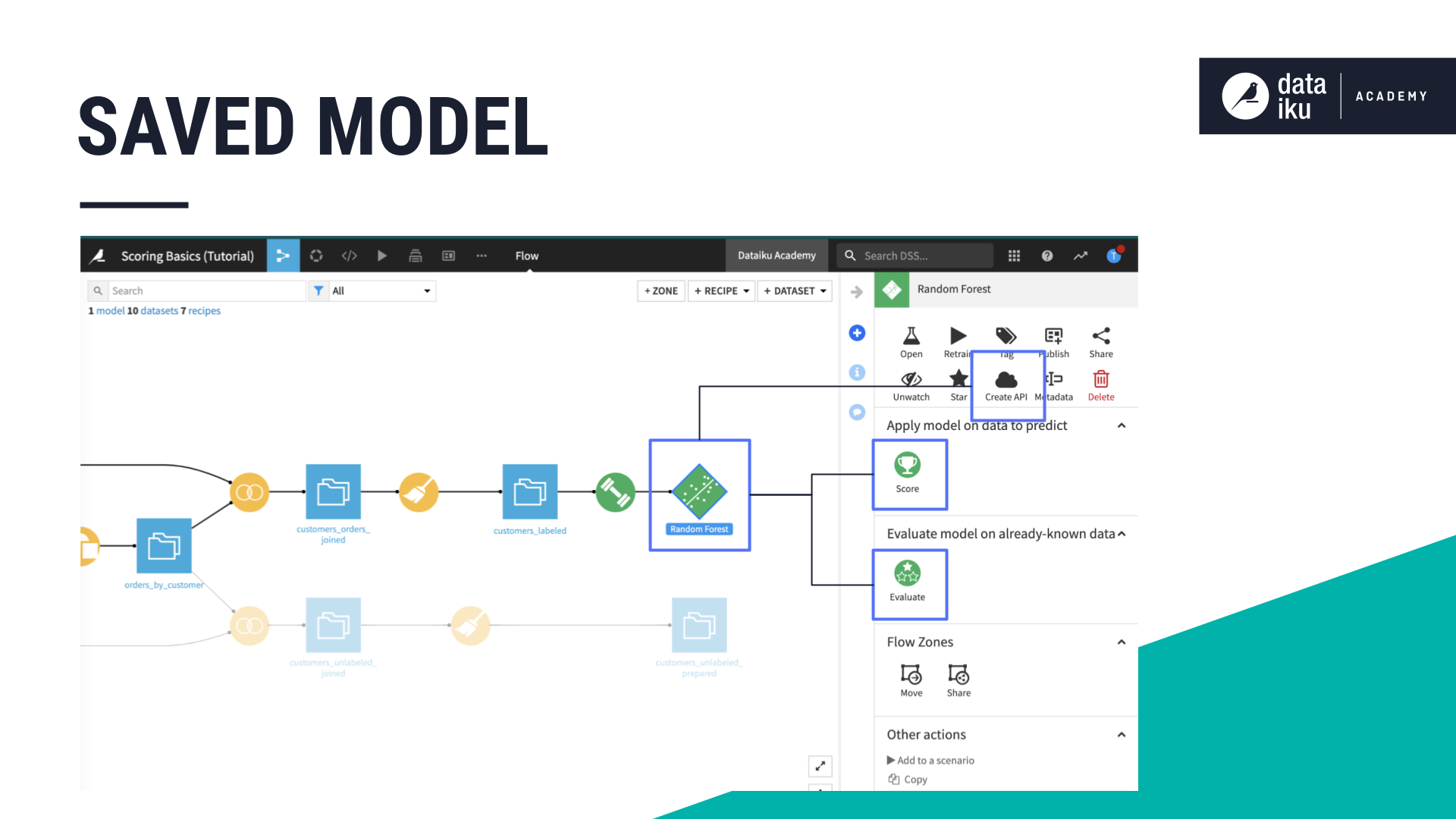

The second object added to the Flow is called a saved model object. Notice we have a new shape in the Flow! A diamond represents a saved model.

A saved model is a Flow artifact that contains the model that was built within the visual analysis. Along with the model object, it contains all the lineage necessary for auditing, retraining, and deploying the model — things like hyperparameters, feature preprocessing, the code environment, etc.

This saved model can be used in several ways:

It can score an unlabeled dataset using the Score recipe.

It can also be evaluated against a labeled test dataset using the Evaluate recipe.

Or it can be packaged and deployed as an API to make real time predictions.

Next steps#

In the article, Concept | Scoring data, we’ll see how to prepare and score new, unlabeled data with a deployed model.