Tutorial | Jenkins pipeline for Dataiku without the Project Deployer

In this article, we will show how to set up a sample CI/CD pipeline built on Jenkins for our Dataiku project. This is part of a series of articles regarding CI/CD with Dataiku.

For an overview of the CI/CD topic, the use case description, the architecture and other examples, please refer to the Tutorial | Getting started with CI/CD pipelines with Dataiku.

If you are using Dataiku 9 or later, we recommend using the Project Deployer to manage your operationalization. In that case, please follow Tutorial | Jenkins pipeline for Dataiku with the Project Deployer.

Note

You can find all the files used in this project attached to this article by downloading the ZIP file.

Introduction

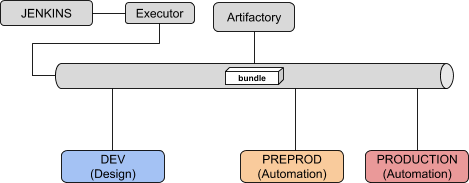

Based on the generic setup described in the introductory article, let’s review the specifics of this use case. Our CI/CD environment will be made of:

One Jenkins server (we will be using local executors) with the following Jenkins plugins:

One Dataiku Design node where data scientists will build their Flows

Two Dataiku Automation nodes, one for pre-production and the other for production

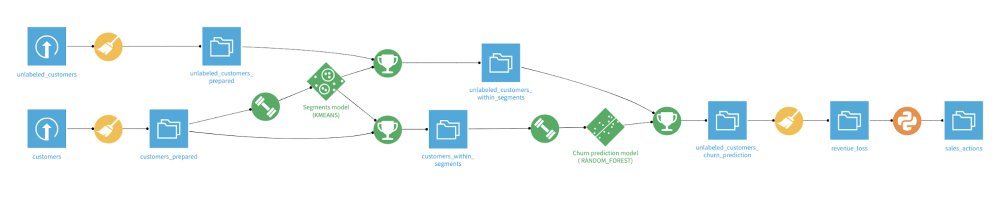

As explained in the introductory article, we will use the Dataiku Prediction churn sample project, which must be deployed and working on your Design node.

Pipeline configuration

The first step is to create a project in Jenkins of the Pipeline type. Let’s call it dss-pipeline-cicd.

We will use the following parameters for this project:

| Variable | Type | Description |
|---|---|---|
| DSS_PROJECT | String | Key of the project we want to deploy |
| DESIGN_URL | String | URL of the Design node |
| DESIGN_API_KEY | Password | API key to connect to the Design node |
| AUTO_PREPROD_URL | String | URL of the PREPROD Automation node |
| AUTO_PREPROD_API_KEY | Password | API key to connect to the PREPROD node |
| AUTO_PROD_URL | String | URL of the PROD Automation node |
| AUTO_PROD_API_KEY | Password | API key to connect to the PROD node |
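Jenkins exposes build parameters to shell steps as environment variables, so each Python script can read them from its environment. The following is a minimal sketch, assuming the scripts take their settings from the environment rather than from command-line arguments; `load_pipeline_config` is a hypothetical helper, not part of the attached files. It fails fast when a parameter is missing.

```python
import os
from typing import Mapping

# Build parameters defined on the Jenkins job (see the table above).
REQUIRED_PARAMS = (
    "DSS_PROJECT",
    "DESIGN_URL",
    "DESIGN_API_KEY",
    "AUTO_PREPROD_URL",
    "AUTO_PREPROD_API_KEY",
    "AUTO_PROD_URL",
    "AUTO_PROD_API_KEY",
)


def load_pipeline_config(env: Mapping[str, str] = os.environ) -> dict:
    """Return the pipeline parameters, raising if any is missing or empty."""
    missing = [name for name in REQUIRED_PARAMS if not env.get(name)]
    if missing:
        raise ValueError("Missing pipeline parameters: " + ", ".join(missing))
    config = {name: env[name] for name in REQUIRED_PARAMS}
    # Strip trailing slashes so scripts can safely append API paths to node URLs.
    for name in ("DESIGN_URL", "AUTO_PREPROD_URL", "AUTO_PROD_URL"):
        config[name] = config[name].rstrip("/")
    return config
```

Failing at the start of a stage gives a clearer error than a connection failure later in the run.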

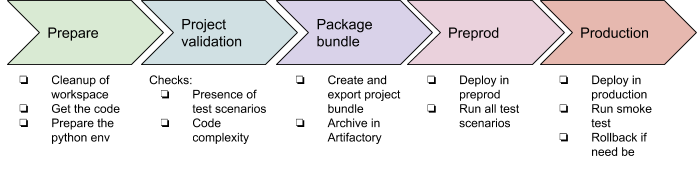

The pipeline contains five stages (PREPARE, PROJECT_VALIDATION, PACKAGE_BUNDLE, PREPROD_TEST, and DEPLOY_TO_PROD) and one post action.

The post action cleans up the bundle ZIP file from the local Jenkins workspace to save space and publishes all the xUnit test reports.

As a global note, we use a global variable, bundle_name, to pass the bundle name from one stage to the next. This variable is computed by a shell script from the date and time of the run.
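The pipeline computes this name in a shell script. For illustration, here is a Python equivalent, assuming a `<project key>_<YYYY-MM-DD_HH-MM-SS>` pattern (the same format as `date +%Y-%m-%d_%H-%M-%S` in the shell). The exact pattern and the `make_bundle_name` helper are assumptions, not the attached script.

```python
from datetime import datetime
from typing import Optional


def make_bundle_name(project_key: str, now: Optional[datetime] = None) -> str:
    """Build a unique, sortable bundle name from the run's date and time."""
    if now is None:
        now = datetime.now()
    # Only letters, digits, hyphens, and underscores, so the name is safe
    # in file names, Artifactory paths, and Dataiku bundle IDs.
    return f"{project_key}_{now:%Y-%m-%d_%H-%M-%S}"
```

For example, `make_bundle_name("MY_PROJECT", datetime(2024, 3, 15, 14, 30, 5))` returns `MY_PROJECT_2024-03-15_14-30-05`. Because the timestamp is zero-padded and goes from largest to smallest unit, bundle names sort chronologically.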

You can find the Groovy file of the pipeline in the ZIP: pipeline.groovy. This file defines each stage and the steps it runs.

Let’s review those steps one by one.

PREPARE stage

This stage builds a clean workspace. Its main tasks are to retrieve the CI/CD files from your GitHub project and to build a Python 3 environment from the requirements.txt file.
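Building the environment amounts to creating a virtual environment and installing requirements.txt into it. Below is a minimal sketch using the standard `venv` module. The directory layout (a `venv` folder next to requirements.txt) and the helper names are assumptions; the attached pipeline may do the same thing directly in shell.

```python
import subprocess
import sys
import venv
from pathlib import Path


def venv_python(venv_dir: Path) -> Path:
    """Path of the interpreter inside a virtual environment (POSIX or Windows layout)."""
    if sys.platform == "win32":
        return venv_dir / "Scripts" / "python.exe"
    return venv_dir / "bin" / "python"


def pip_install_command(venv_dir: Path, requirements: Path) -> list:
    """Command that installs the CI/CD dependencies into the virtual environment."""
    return [str(venv_python(venv_dir)), "-m", "pip", "install", "-r", str(requirements)]


def prepare_environment(venv_dir: Path, requirements: Path) -> None:
    """Create the virtual environment (with pip) and install requirements.txt into it."""
    venv.create(venv_dir, with_pip=True)
    subprocess.run(pip_install_command(venv_dir, requirements), check=True)
```

Calling `prepare_environment(Path("venv"), Path("requirements.txt"))` from the workspace root would then give later stages an isolated interpreter at `venv/bin/python`. Passing `check=True` makes a failed install fail the stage.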

PROJECT_VALIDATION stage

This stage mostly runs Python scripts that validate that the project follows internal rules for being production-ready. Any check can be performed at this stage, whether on the project structure, its setup, or its code, such as code recipes or project libraries.

Note that we use pytest's support for custom command-line options, which we declare in a conftest.py file.
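For reference, here is a minimal conftest.py sketch using pytest's `pytest_addoption` hook. The option names `--host`, `--api-key`, and `--project` are assumptions chosen to match the pipeline parameters; they are not necessarily the ones in the attached files.

```python
# conftest.py: registers the command-line options shared by the test files.
# Option names below are illustrative assumptions.
OPTIONS = (
    ("--host", "URL of the Dataiku node to validate or test"),
    ("--api-key", "API key used to connect to that node"),
    ("--project", "Key of the Dataiku project (the DSS_PROJECT parameter)"),
)


def pytest_addoption(parser):
    """pytest hook: register custom command-line options."""
    for name, help_text in OPTIONS:
        parser.addoption(name, action="store", default=None, help=help_text)
```

A test in run_test.py can then read a value through pytest's built-in `pytestconfig` fixture, for example `pytestconfig.getoption("--host")`. Jenkins would invoke something like `python -m pytest run_test.py --host "$DESIGN_URL" --api-key "$DESIGN_API_KEY" --project "$DSS_PROJECT" --junitxml=reports/validation.xml`, where `--junitxml` writes the xUnit report that Jenkins collects at the end.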

This is specific to each installation, but here are the main takeaways:

In this project, we use the pytest framework to run the tests and report the results to Jenkins. The conftest.py file loads our command-line options. The run_test.py file contains the actual tests, which are all Python functions whose names start with test_. The checks we have:

If this stage passes, we know the project is properly written and we can package it.

PACKAGE_BUNDLE stage

The first part of this stage uses a Python script to create a bundle of the project and download it locally to the Jenkins executor.

The second part uses a Jenkins step to publish this bundle to our Artifactory repository generic-local/dss_bundle/. Note that the step fails if no file is published (the failNoOp: true option), so no extra check is needed.
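The first part can be sketched as follows. This assumes `project` behaves like a dataikuapi `DSSProject` on the Design node, for example `dataikuapi.DSSClient(DESIGN_URL, DESIGN_API_KEY).get_project(DSS_PROJECT)`, and uses its `export_bundle` and `download_exported_bundle_archive_to_file` methods. Check these against the dataikuapi version installed on your executor. The `package_bundle` function and the `<bundle>.zip` file naming are hypothetical.

```python
from pathlib import Path


def package_bundle(project, bundle_id: str, out_dir: str) -> Path:
    """Export a bundle on the Design node and download it to the Jenkins workspace.

    `project` is expected to behave like a dataikuapi DSSProject.
    """
    project.export_bundle(bundle_id)  # create the bundle on the Design node
    target = Path(out_dir) / f"{bundle_id}.zip"
    target.parent.mkdir(parents=True, exist_ok=True)
    project.download_exported_bundle_archive_to_file(bundle_id, str(target))
    # Fail early with a clear message if nothing usable was downloaded.
    if not target.is_file() or target.stat().st_size == 0:
        raise RuntimeError(f"Bundle archive {target} was not downloaded")
    return target
```

The returned path is what the Artifactory upload step would then publish under generic-local/dss_bundle/.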

PREPROD_TEST stage

In this stage, we deploy the bundle produced in the previous stage to our DSS PREPROD instance and then run tests.

The bundle import is done in import_bundle.py and is straightforward: import, preload, activate. In this example, we assume connection mappings are handled automatically. If you need specific mappings, this requires additional work with the API.
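A minimal sketch of the import, preload, and activate sequence follows. It assumes `client` behaves like a dataikuapi `DSSClient` pointed at the Automation node. It uses `list_project_keys`, `get_project`, `create_project_from_bundle_archive`, `import_bundle_from_stream`, `preload_bundle`, and `activate_bundle`, which match the dataikuapi client as we understand it; verify them against your version. Connection remapping is not handled. The `activate` flag is a hypothetical addition so the same function could serve the production upload, where activation is left to a separate script.

```python
def import_bundle(client, project_key: str, archive_path: str, bundle_id: str,
                  activate: bool = True):
    """Upload a bundle archive to an Automation node, then preload and activate it.

    `client` is expected to behave like dataikuapi.DSSClient.
    """
    with open(archive_path, "rb") as fp:
        if project_key in client.list_project_keys():
            # The project already exists on this node: add the new bundle to it.
            project = client.get_project(project_key)
            project.import_bundle_from_stream(fp)
        else:
            # First deployment: the project is created from the bundle itself.
            client.create_project_from_bundle_archive(fp)
            project = client.get_project(project_key)
    if activate:
        project.preload_bundle(bundle_id)   # prepare the bundle before switching
        project.activate_bundle(bundle_id)  # make it the live version of the project
    return project
```

The archive is streamed from the Jenkins executor, so the Automation node does not need access to the Jenkins file system.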

The next script, run_test.py, runs all the scenarios named TEST_XXX and fails if any of them does not succeed.
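The selection-and-failure logic can be sketched independently of Dataiku. Here `run_scenario` is a hypothetical callable that runs one scenario and returns its outcome string. With dataikuapi, it would typically wrap `DSSScenario.run_and_wait()`. We also assume that anything other than `SUCCESS` (such as `WARNING` or `FAILED`) counts as a failure, matching "fails if any of them does not succeed".

```python
from typing import Callable, Iterable


def select_test_scenarios(scenario_ids: Iterable[str], prefix: str = "TEST_") -> list:
    """Keep only the scenarios that follow the TEST_XXX naming convention."""
    return sorted(sid for sid in scenario_ids if sid.startswith(prefix))


def run_all_test_scenarios(scenario_ids, run_scenario: Callable[[str], str]) -> dict:
    """Run every TEST_ scenario and raise if any outcome is not SUCCESS.

    `run_scenario` is a hypothetical callable returning the outcome of one run.
    """
    outcomes = {sid: run_scenario(sid) for sid in select_test_scenarios(scenario_ids)}
    failed = {sid: outcome for sid, outcome in outcomes.items() if outcome != "SUCCESS"}
    if failed:
        raise AssertionError(f"Scenarios not successful: {failed}")
    return outcomes
```

Raising `AssertionError` means that, when this is called from a pytest test, the failure is reported like any other failed assertion.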

The blog article explains why we use Dataiku scenarios.

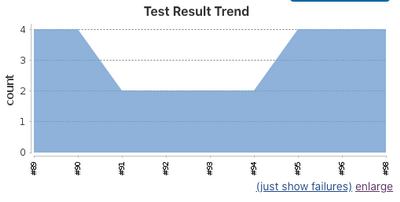

This pytest configuration has an additional twist. If you have a single test that runs all the TEST_XXX scenarios, they are reported to Jenkins as one test, either successful or failed.

Here, we improve on this by dynamically creating one unit test per scenario, so the final report contains one entry per executed scenario, which is more meaningful. This requires some understanding of pytest parametrization. If you are not comfortable with it, you can keep a single test that runs all your scenarios.

DEPLOY_TO_PROD stage

The previous stage verified that we have a valid package. It’s time to move it to production!

Again using the import_bundle.py Python script, we upload the bundle to the production node with the same logic as for PREPROD.

A second script, prod_activation.py, handles activation and rollback. Its main steps are:

Get the currently active bundle. Note that we need to iterate through the projects to find this information.

The uploaded bundle is preloaded and then activated within a try statement. Since activation is an atomic operation, if it fails, there is nothing to roll back.

To ensure the new bundle is working, we execute the TEST_SMOKE scenario.

If TEST_SMOKE execution fails, we perform the rollback by re-activating the previous bundle.
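The activation-with-rollback logic can be sketched as follows. This assumes `project` behaves like a dataikuapi `DSSProject` on the PROD node and that activation errors surface as exceptions. `run_smoke_test` is a hypothetical callable that runs TEST_SMOKE and returns True on success. `previous_bundle_id` is the bundle found in the first step, or `None` on a first deployment.

```python
from typing import Callable, Optional


def activate_with_rollback(
    project,
    new_bundle_id: str,
    previous_bundle_id: Optional[str],
    run_smoke_test: Callable[[], bool],
) -> str:
    """Activate `new_bundle_id`, reactivating the previous bundle if TEST_SMOKE fails.

    Returns the new bundle ID on success; raises after rolling back otherwise,
    so the Jenkins stage is still marked as failed.
    """
    project.preload_bundle(new_bundle_id)
    # Activation is atomic: if this raises, the previous bundle is still active,
    # so there is nothing to roll back and the error simply fails the stage.
    project.activate_bundle(new_bundle_id)
    if run_smoke_test():
        return new_bundle_id
    if previous_bundle_id is None:
        raise RuntimeError("TEST_SMOKE failed and there is no previous bundle to restore")
    project.activate_bundle(previous_bundle_id)  # rollback
    raise RuntimeError(f"TEST_SMOKE failed; rolled back to bundle {previous_bundle_id}")
```

Raising after a successful rollback is deliberate: production is back to a known state, but the pipeline run should still be marked as failed so someone investigates.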

Post actions

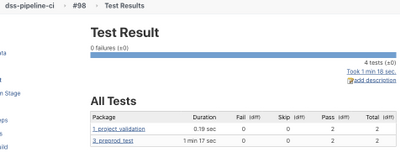

The post actions clean up the locally downloaded ZIP files and publish all the xUnit test reports in Jenkins. These reports, produced by pytest throughout the pipeline, are aggregated into a single test report view, as in the screenshot above.
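In the pipeline, the post actions are written in Groovy. As an illustration of the cleanup part only, here is a Python equivalent, assuming bundles are downloaded into a dedicated folder of the workspace. `clean_bundle_archives` and the folder layout are hypothetical. The function deliberately does not search recursively, so ZIP files elsewhere in the checkout are left alone.

```python
from pathlib import Path


def clean_bundle_archives(bundle_dir: str, pattern: str = "*.zip") -> list:
    """Delete downloaded bundle archives from one workspace folder; return removed names."""
    removed = []
    for archive in sorted(Path(bundle_dir).glob(pattern)):
        if archive.is_file():
            archive.unlink()
            removed.append(archive.name)
    return removed
```

The xUnit files are left in place for Jenkins to publish; they are the files pytest writes when run with its `--junitxml` option.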

Sample usage

We have now walked step by step through building a solid CI/CD pipeline with Jenkins. If you want to reuse it and adapt it to your setup, here is a checklist of what you need to do:

Have your Jenkins, Artifactory, and Dataiku nodes installed and running.

Make sure to have Python 3 installed on your Jenkins executor.

Get all the Python scripts for the project and put them in your own source code repository.

Create a new pipeline project in your Jenkins:

Add the variables as project parameters and assign them a default value according to your setup.

Copy and paste the contents of pipeline.groovy as the Pipeline script.

In the pipeline, set up your source code repository in the PREPARE stage.

Hit Build with parameters.

Ideas for improvement

Here are some ideas for improving this starter kit:

Define a trigger for your pipeline:

A time-based trigger, for example to run the pipeline every day.

A Jenkins GUI manual trigger where users connect to Jenkins and trigger the job.

An API trigger, by calling Jenkins from a Dataiku webapp, scenario, or macro (using the Generic Webhook Trigger Jenkins plugin or the Jenkins Remote Access API).
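With the Remote Access API, a parameterized job is started by a POST to `<jenkins>/job/<job name>/buildWithParameters`, authenticated with a Jenkins user name and API token. The sketch below only builds the request with the standard library. Sending it, for example from a Python step in a Dataiku scenario, is a matter of calling `urllib.request.urlopen(req)`. The Jenkins URL, user, and token are placeholders. We assume the API-key parameters keep their default values in Jenkins, so only `DSS_PROJECT` is passed.

```python
import base64
import urllib.parse
import urllib.request


def build_trigger_request(jenkins_url: str, job_name: str, user: str,
                          api_token: str, params: dict) -> urllib.request.Request:
    """Prepare a POST to the job's buildWithParameters endpoint (not sent here)."""
    url = f"{jenkins_url.rstrip('/')}/job/{urllib.parse.quote(job_name)}/buildWithParameters"
    data = urllib.parse.urlencode(params).encode()
    credentials = base64.b64encode(f"{user}:{api_token}".encode()).decode()
    return urllib.request.Request(
        url,
        data=data,
        method="POST",
        headers={
            "Authorization": f"Basic {credentials}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
    )
```

For the job from this tutorial, `build_trigger_request("https://jenkins.example.com", "dss-pipeline-cicd", "<user>", "<api token>", {"DSS_PROJECT": "<project key>"})` targets `https://jenkins.example.com/job/dss-pipeline-cicd/buildWithParameters`. Store the token in a Dataiku secret rather than in code.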

You can also add a manual sign-off to the process if you want a human approval before deploying. The easiest way is to use the Jenkins manual input step.

Add as many test scenarios as you can: the better your test coverage, the more reliable your continuous deployment.