Tutorial | SQL from a Python recipe in Dataiku#

Get started#

SQL is the most pervasive way to make data analysis queries. However, writing advanced logic like loops and conditions is often difficult in SQL. There are some options like stored queries, but they require learning new languages.

Dataiku lets you run SQL queries directly from a Python recipe. This lets you:

Sequence several SQL queries.

Dynamically generate new SQL queries to execute in the database.

Use SQL to obtain some aggregated data for further numerical processing in Python.

Objectives#

In this tutorial, you will:

Run a query in an SQL notebook.

Use Python to generate a more complex SQL query.

Execute an SQL query in-database from a Python recipe.

Prerequisites#

Dataiku 12.0 or later.

An Advanced Analytics Designer or Full Designer user profile.

Any supported SQL database configured in Dataiku.

Knowledge of Python and SQL.

Create the project#

From the Dataiku Design homepage, click + New Project.

Select Learning projects.

Search for and select SQL in Python.

If needed, change the folder into which the project will be installed, and click Create.

From the project homepage, click Go to Flow (or type

g+f).

From the Dataiku Design homepage, click + New Project.

Select DSS tutorials.

Filter by Developer.

Select SQL in Python.

From the project homepage, click Go to Flow (or type

g+f).

Note

You can also download the starter project from this website and import it as a zip file.

Use case summary#

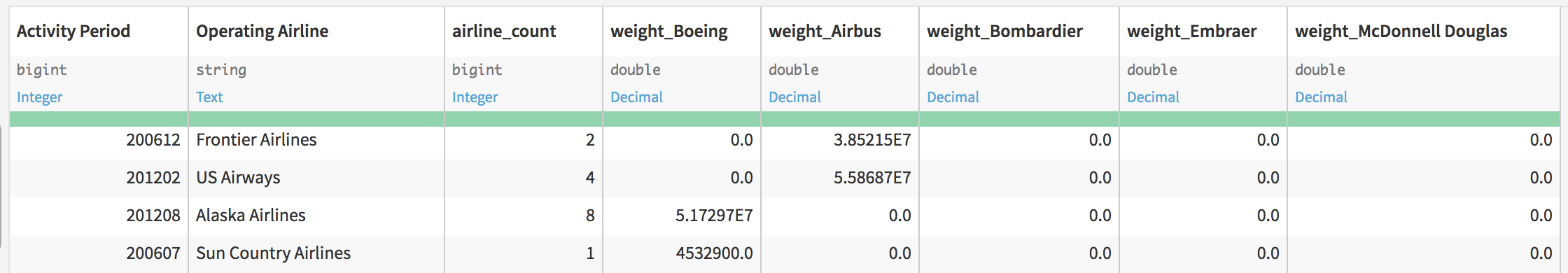

The sfo_monthly_landing_data dataset records the number of cargo landings and total landed cargo weight at the SFO airport. From 2005 to 2015, the dataset contains one record for each month, airline, type and details of aircraft. The line includes the number of aircraft of this type of this company that landed this month, and the number of records.

What we would like to do is write a recipe to obtain, for each month and airline, a breakdown in several columns of the total landing weight by aircraft manufacturer. In essence, that’s a kind of crosstab / pivot table.

To make things a bit more complex, we’re not interested in the small aircraft manufacturers. We only want the top 5 manufacturers, meaning those with the highest count of landings.

With these constraints, doing that in SQL only would be complex. Let’s do it with a little bit of Python calling SQL.

Sync data to the database#

Your Dataiku project has the source data in a filesystem (or uploaded) dataset. As this is an import from a CSV file, Dataiku has assigned only string types to the input columns. If you go to the Settings > Schema tab of the dataset, you’ll see the columns declared as string.

We could set the types manually in the dataset, but we could also let the Prepare recipe do it. Since anyway we need to copy our dataset to an SQL database, using a Prepare recipe instead of a Sync recipe will give us the typing inference for free.

Create a Prepare recipe from the sfo_monthly_landing_data dataset

Choose to store the output in your SQL database connection.

Name the output dataset

sfo_prepared.Click Run since no preparation steps are needed.

Note

We have additional resources on syncing data to a database using the Sync or Prepare recipes.

Experiment in an SQL notebook#

To create the column per manufacturer, we’re going to use the CASE expression. For example, let’s use an SQL notebook to calculate the total landing weights of Airbus planes.

Create a new SQL notebook on the sfo_prepared dataset.

In the Tables tab of the left hand panel, click the + next to the sfo_prepared dataset to copy a query that selects all columns and rows from this table.

Adjusting the table name if needed, copy and Run the query below that sums the weight of each row if it’s Airbus.

SELECT

SUM(CASE

WHEN "Aircraft Manufacturer" = 'Airbus' THEN "Total Landed Weight"

ELSE 0

END) AS airbus_weight

FROM "DKU_TUTORIAL_SQLINPYTHON_sfo_prepared"

Note

Be sure to recognize the correct reference to your table name. Run on a local PostgreSQL connection, the full table name is "DKU_TUTORIAL_SQLINPYTHON_sfo_prepared". However, if using a managed Snowflake connection on Dataiku Cloud, for example, the complete table name would include instance information. You’ll need this table name for the remainder of the tutorial.

Your project key might also differ if projects of the same name already existed when creating the project.

Run your first SQL query from a Python notebook#

Now let’s have Python send an SQL query that creates a pandas DataFrame of the top five manufacturers.

From the sfo_prepared dataset, create a new Python notebook.

Replace the starter code with the code below.

Adjust the table name as needed depending on your instance, and then Run the cell.

# -*- coding: utf-8 -*-

import dataiku

import pandas as pd, numpy as np

from dataiku import pandasutils as pdu

# Import the class that allows us to execute SQL on the Studio connections

from dataiku import SQLExecutor2

# Get a handle on the input dataset

sfo_prepared = dataiku.Dataset("sfo_prepared")

# We create an executor. We pass to it the dataset instance. This way, the

# executor knows which SQL database should be targeted

executor = SQLExecutor2(dataset=sfo_prepared)

# Get the 5 most frequent manufacturers by total landing count

# (over the whole period)

mf_manufacturers = executor.query_to_df(

"""

SELECT

"Aircraft Manufacturer" as manufacturer,

SUM("Landing Count") as count

FROM "DKU_TUTORIAL_SQLINPYTHON_sfo_prepared"

GROUP BY "Aircraft Manufacturer"

ORDER BY count DESC

LIMIT 5

""")

# The "query_to_df" method returns a pandas DataFrame that

# contains the manufacturers

Feel free to print out and inspect the mf_manufacturers DataFrame created from this code.

Note

You can learn more in the Developer Guide about performing SQL queries with the Python API.

Create the final SQL query with Python#

Now that we have a DataFrame with the top manufacturers, let’s use a loop to generate a Python list of these pesky CASE WHEN expressions.

In a new cell at the end of the same Python notebook, copy and Run the following:

cases = [] for (row_index, manufacturer, count) in mf_manufacturers.itertuples(): cases.append( """ SUM(CASE WHEN "Aircraft Manufacturer" = '%s' THEN "Total Landed Weight" ELSE 0 END) AS "weight_%s" """ % (manufacturer, manufacturer))

We can now use this list of case expressions to create the final SQL query.

In a new cell at the end of the same Python notebook, copy and Run the following (again adjusting the table name as necessary):

final_query = """ SELECT "Activity Period", "Operating Airline", COUNT(*) AS airline_count, %s FROM "DKU_TUTORIAL_SQLINPYTHON_sfo_prepared" GROUP BY "Activity Period", "Operating Airline" """ % (",".join(cases)) print(final_query)

Build the output dataset#

To finish, we need to execute the query with a Python recipe.

Click + Create Recipe > Python recipe > OK.

Add a new output dataset named

sfo_pivot.Click Create Dataset and Create Recipe.

Replace the default output at the end of the recipe with the code below.

result = executor.query_to_df(final_query) output_dataset = dataiku.Dataset("sfo_pivot") output_dataset.write_with_schema(result)

Click Run.

Visualize the output#

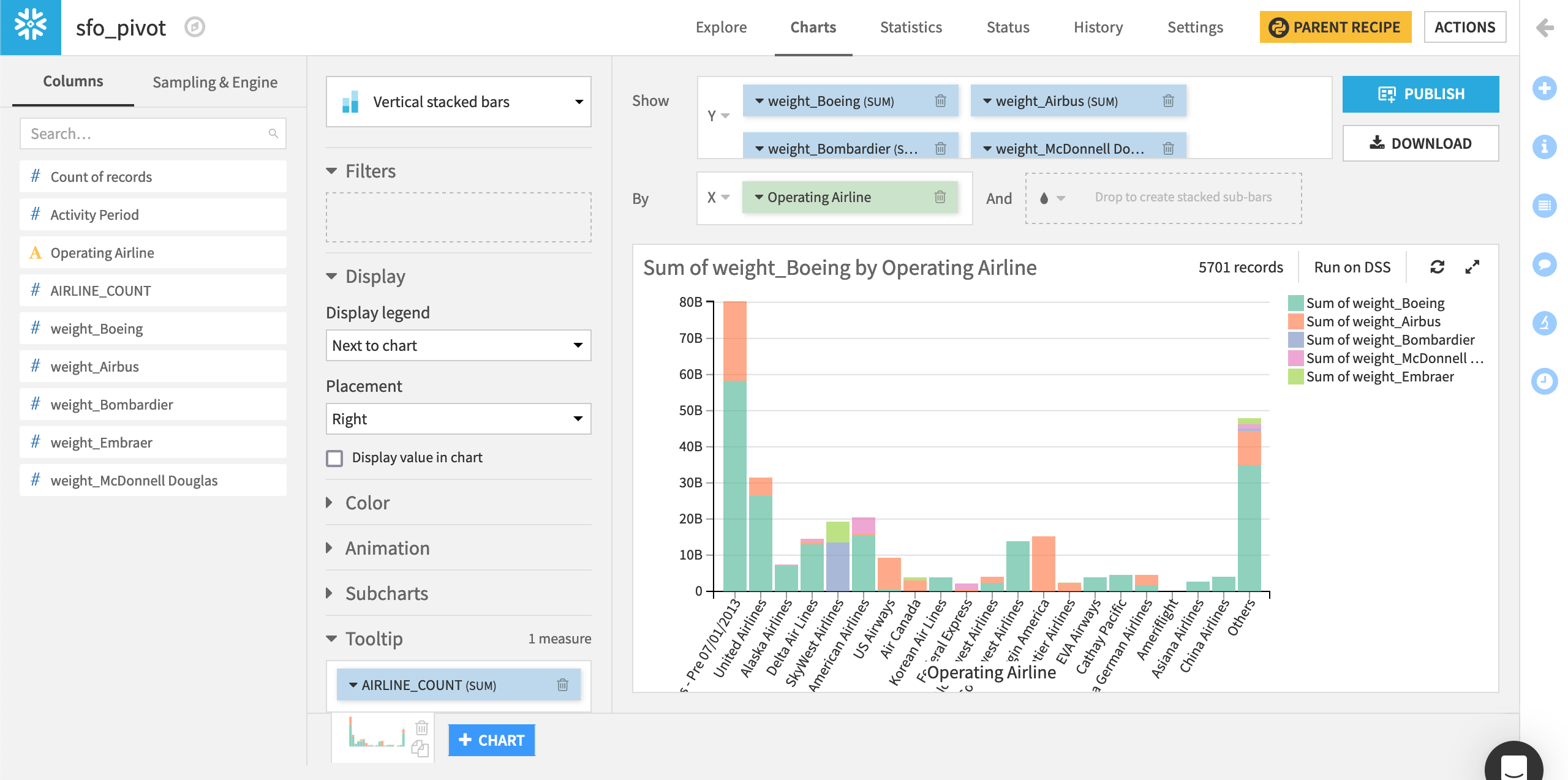

The output is exactly what we wanted.

Here is a vertical stacked bar chart with the sum of the weights of the top five manufacturers in the Y field and the operating airlines in the X field, sorted by descending airline counts.

Fairly unsurprisingly, Boeing is dominant, and most airlines are mono-carrier. However, United Airlines has a non-negligible Airbus cargo fleet.

Execute in-database#

In this first version, we executed both queries using query_to_df, meaning that the Python code actually received the whole data in memory, and sent it back to the database for storing the output dataset.

It would be better (and critical in the case of big datasets) that the final query be performed fully in-database.

Fortunately, Dataiku makes that easy. Executing SQL queries in-database and handling the work of dropping/creating table is what the SQL query does. The SQLExecutor2 class lets you run a query as if it was an SQL Query recipe.

Return to the Python recipe.

Replace the final three lines of the code by the code block below.

output_dataset = dataiku.Dataset("sfo_pivot") SQLExecutor2.exec_recipe_fragment(output_dataset, final_query)

Click Run.

Everything works the same, but now the data hasn’t been streamed to Python. Everything stayed in the database!