Concept | LLM connections#

Large language models are what make Generative AI possible. To use GenAI in Dataiku, you must first connect to a service providing access to LLMs.

Once an administrator sets up connections that make LLMs available to your organization, you can use the LLMs from these connections with Dataiku’s:

Agents, to power the agent’s tasks.

LLM visual recipes, such as the Prompt recipe, text processing recipes (summarization, classification), and the Embed recipe for Retrieval-Augmented Generation (RAG).

LLM-as-a-judge capabilities in LLM evaluation, agent evaluation, and Agent Review.

Python API for usage in code recipes, code studios, notebooks, and webapps.
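As an illustration of the Python API option, here is a minimal sketch of querying an LLM exposed through a connection. It assumes the LLM Mesh methods from Dataiku's public Python API (`list_llms`, `get_llm`, `new_completion`, `with_message`, `execute`); the helper names `list_llm_ids`, `ask_llm`, and `run_in_dataiku` are hypothetical and exist only for this example. The LLM ID you pass in depends on the connections your administrator has configured.

```python
def list_llm_ids(project):
    """Return (id, description) pairs for the LLMs visible to a project.

    Assumes `project` behaves like a Dataiku project handle whose
    list_llms() items expose `id` and `description` attributes.
    """
    return [(llm.id, llm.description) for llm in project.list_llms()]


def ask_llm(project, llm_id, prompt):
    """Send a single user message to an LLM and return the text reply."""
    llm = project.get_llm(llm_id)
    completion = llm.new_completion()
    completion.with_message(prompt)
    response = completion.execute()
    if not response.success:
        # Assumption: a failed query is reported through `success`.
        raise RuntimeError(f"LLM query failed for {llm_id!r}")
    return response.text


def run_in_dataiku(llm_id, prompt):
    """Hypothetical entry point; only works inside a Dataiku environment."""
    import dataiku  # imported lazily: available in code recipes and notebooks

    project = dataiku.api_client().get_default_project()
    return ask_llm(project, llm_id, prompt)
```

Inside a code recipe or notebook, you could call `list_llm_ids` on the default project to find a valid ID, then pass that ID to `run_in_dataiku` along with your prompt.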

Adding a connection#

Users with administrator rights can add and manage LLM connections in the connections settings of an instance. The settings location varies slightly depending on the instance type.

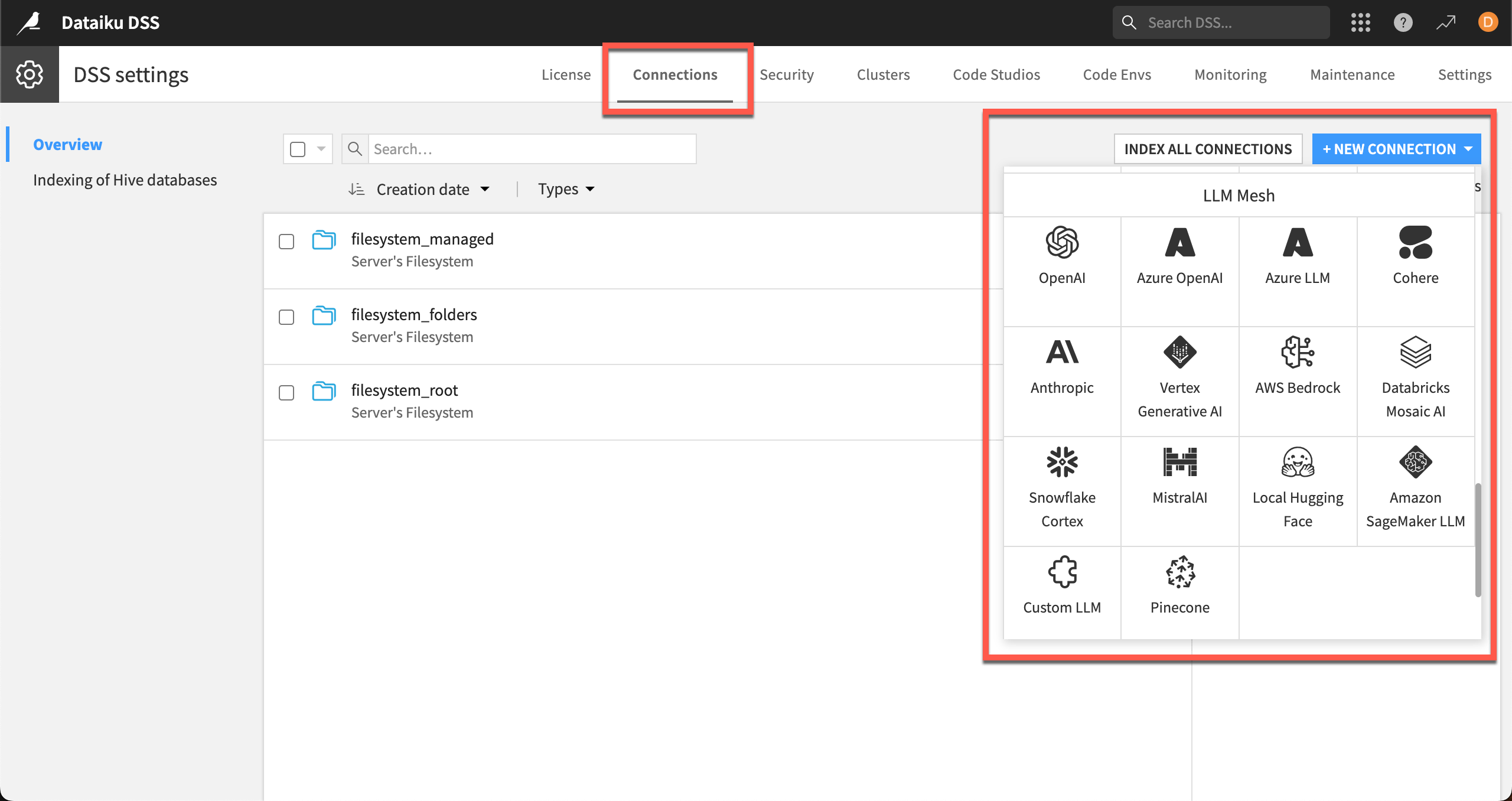

For self-managed instances, settings are in the waffle menu > Administration > Connections > + New Connection > LLM Mesh.

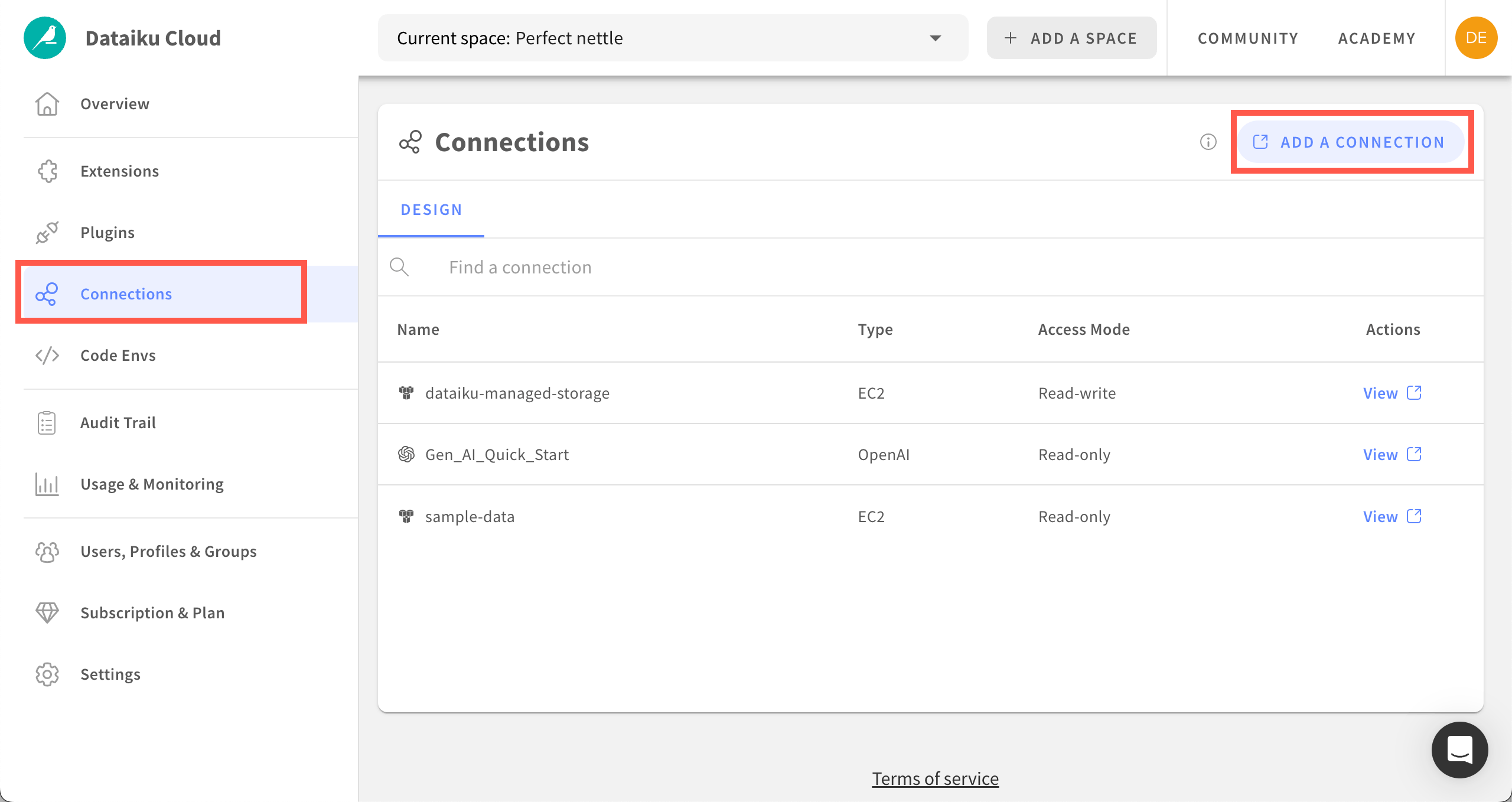

Dataiku Cloud connection settings are in the Launchpad left panel > Connections > Add a Connection > LLM Mesh.

Types of connections#

LLMs are provided either as a service or as objects that your organization must manage. Your organization might decide to work with multiple providers, commercial services, or open-source models.

Built-in connections#

Dataiku provides connections out-of-the-box for both commercial services and open-source model providers. Connection requirements vary by provider.

Supported connections to GenAI services include:

Commercial services such as OpenAI, Anthropic, AWS Bedrock, and Snowflake.

Hugging Face for open-source models.

Note

For open-source models, Dataiku provides serving capabilities, including containerized execution and integration with NVIDIA GPUs. Administrators only need to configure these capabilities, which simplifies management of the serving infrastructure.

Custom connections#

You can also develop custom LLMs, or connect to your internal custom gateway, and use them via a custom LLM connection.

See also

For more details on the types of LLM connections and instructions to set them up, see the reference documentation.